Over the last couple of years, much has been written about the security of Docker containers, with most of the analysis focusing on the comparison between containers and virtual machines.

Given the similar use cases addressed by virtual machines and containers, this is a natural comparison to make. However, I prefer to see the two technologies as complementary. Indeed, a large proportion of the containers deployed today run inside virtual machines. This is especially true of public cloud deployments such as Amazon’s EC2 Container Service (ECS) and Google Container Engine (GKE).

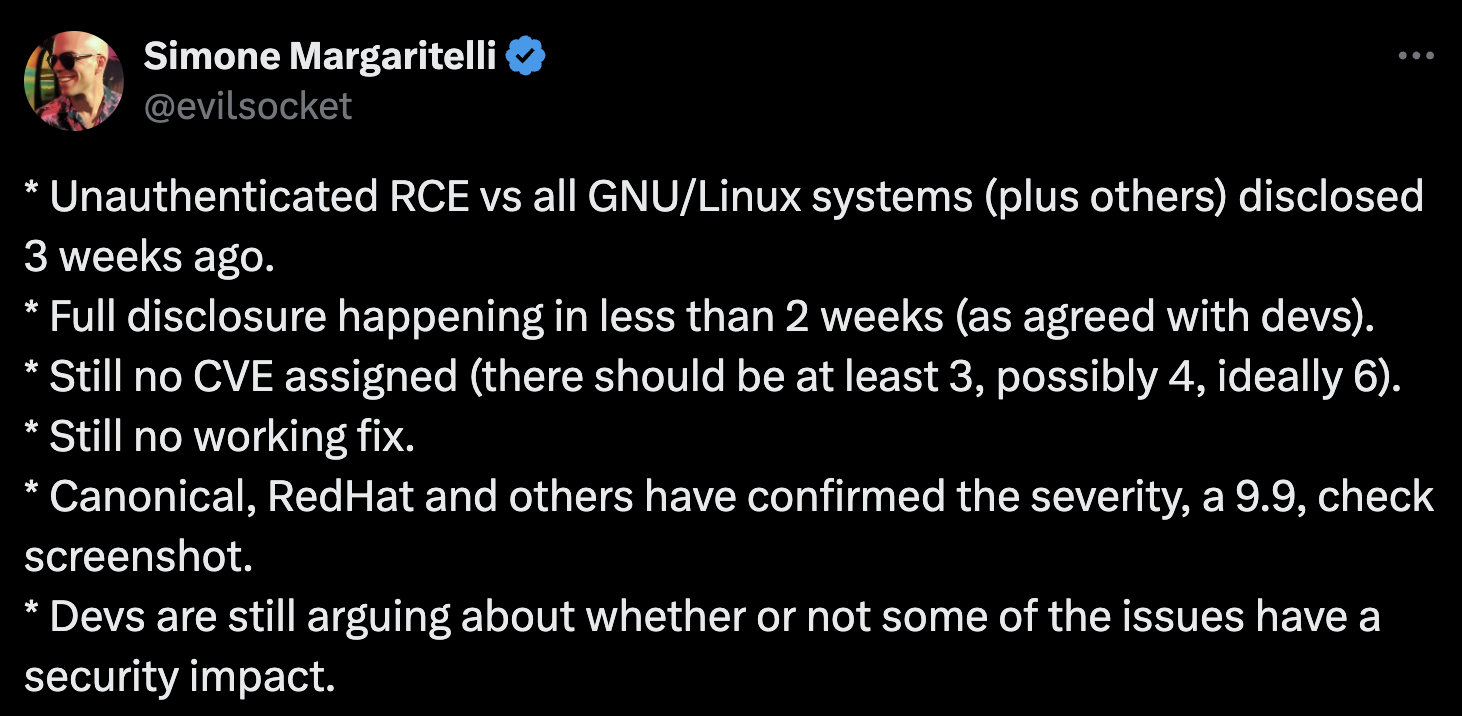

While we have seen a number of significant enhancements made to container runtimes to improve isolation, containers will continue to offer less isolation than traditional virtual machines for the foreseeable future. This is because, in the container model, every container shares the same host kernel, so if a security exploit or kernel-related bug is triggered from a container, the host system and all running containers on that host are potentially at risk.

For use cases where absolute isolation is required, for example where an image may come from an untrusted source, virtual machines are the obvious solution. For this reason, multi-tenant systems such as public clouds and private Infrastructure as a Service (IaaS) platforms will tend to use virtual machines.

In a single-tenant use case such as enterprise IT infrastructure, where the deployment and production pipeline can be designed and controlled with security in mind, containers offer a lightweight and simple mechanism for isolating workloads. This is the use case where we have seen exponential growth of container deployments. We are also starting to see crossover technologies such as Intel’s Clear Containers, which run containers inside lightweight virtual machines and so allow the user to provide stronger isolation for a specific container when deemed necessary.

Within the last year or so we have seen container isolation techniques improve considerably through the use of Linux kernel features such as namespaces, seccomp, cgroups, SELinux and AppArmor.

Recently Joerg Fritsch from Gartner published a research note and blog post in which he made the following statement:

“Applications deployed in containers are more secure than applications deployed on the bare OS”.

Following on from this note, Nathan McCauley from Docker wrote a blog post that dug further into this topic and referenced NCC Group’s excellent white paper on Hardening Linux Containers.

The high-level message here is that “you are safer if you run all your apps in containers”. More specifically, the idea is to take applications that you would normally run on ‘bare metal’ and deploy them as containers on bare metal. This approach adds a number of extra layers of protection around these applications and reduces the attack surface. In the case of a successful exploit against an application, the damage would be limited to its container, reducing the potential exposure of the other applications running on that bare metal system.

While I would agree with this recommendation, there are, as always, a number of caveats to consider. The most important of these relates to the contents of the container.

When you deploy a container you are not just deploying an application binary in a convenient packaging format. You are often deploying an image that contains an operating system runtime, shared libraries, and potentially some middleware that supports the application.

In our experience, a large proportion of end-users build their containers based on full operating system base images that often include hundreds of packages and thousands of files. While deploying your application within a container will provide extra levels of isolation and security, you must ensure that the container is both well constructed and well maintained. In the traditional deployment model, all applications use a common set of shared libraries so, for example, when the runtime C library glibc on the host is updated, all the applications on that system use the new library. In the container model, however, each container includes its own runtime libraries, which need to be updated separately. In addition, you may find that these containers include more libraries and binaries than are required - for example, does an nginx container need the mount binary?
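
To make the nginx and mount question concrete, here is a minimal Python sketch of how such a check might look. It is an illustration only, not how Anchore or any other tool works. It assumes you have already collected a listing of the image's files and their mode bits (for example from an exported image tarball); the function name `unexpected_executables`, the sample listing, and the allow-list are all hypothetical.

```python
import stat


def unexpected_executables(file_modes, allowed_names):
    """Return paths of executable regular files whose name is not allow-listed.

    file_modes: dict mapping a path inside the image to its st_mode-style int.
    allowed_names: set of binary names the application is expected to need.
    """
    flagged = []
    for path, mode in file_modes.items():
        name = path.rsplit("/", 1)[-1]
        # A regular file with any execute bit set counts as an executable here.
        is_executable = stat.S_ISREG(mode) and bool(mode & 0o111)
        if is_executable and name not in allowed_names:
            flagged.append(path)
    return sorted(flagged)


# Hypothetical listing for a small nginx image (made-up sample data).
image_files = {
    "usr/sbin/nginx": 0o100755,
    "bin/mount": 0o100755,
    "etc/nginx/nginx.conf": 0o100644,
}

print(unexpected_executables(image_files, {"nginx"}))  # ['bin/mount']
```

A real image would need a much longer allow-list (shells, dynamic loaders, helper scripts), so a check like this is a starting point for review rather than a verdict.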

As always, nothing comes without a cost. Each application you containerize needs to be maintained and monitored, but it’s clear that the advantages in terms of security and agility provided by Docker, and containers in general, far outweigh the administrative overhead. That overhead can be addressed with the appropriate policies and tooling, which is where Anchore can help.

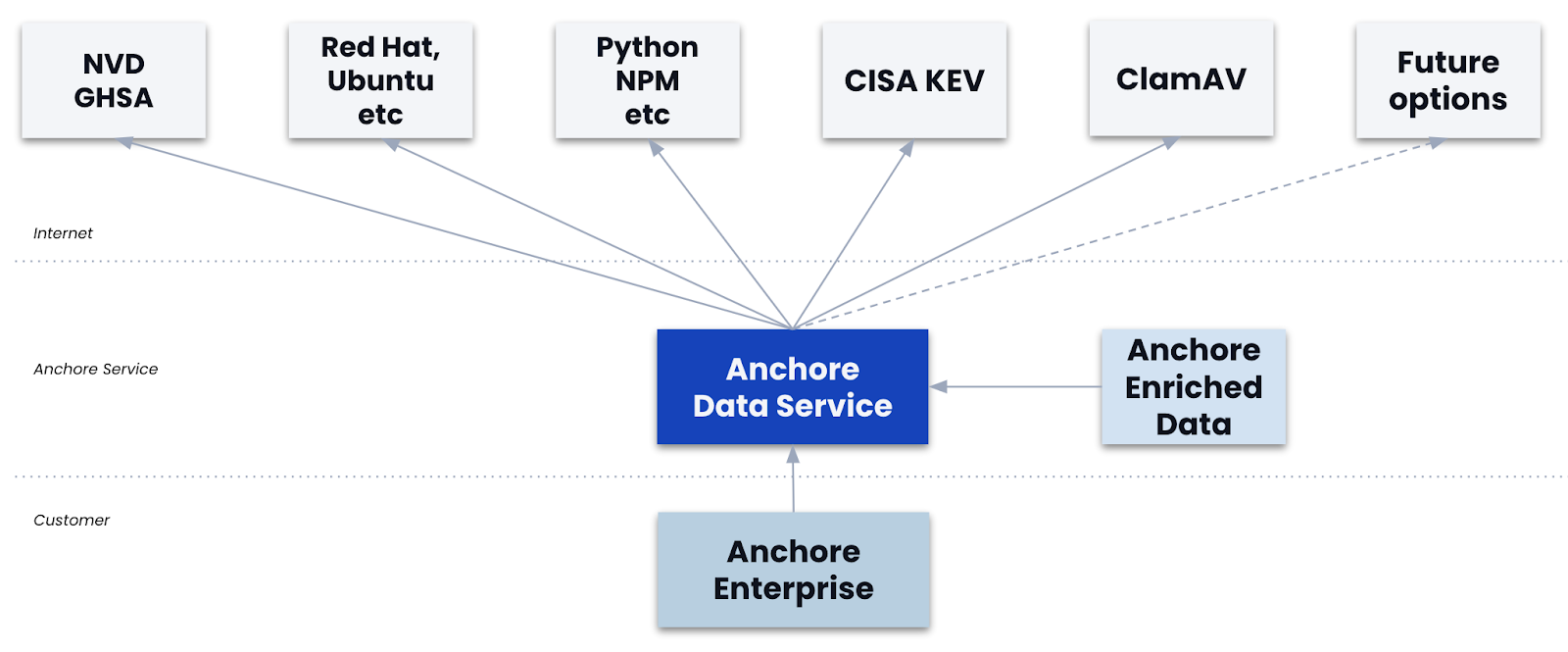

Anchore provides tooling and a service that gives unparalleled insight into the contents of your containers, whether you are building your own container images or using images from third parties. Using Anchore’s tools an organization can gain deep insight into the contents of their containers and define policies that are used to validate the contents of those containers before they are deployed. Once deployed, Anchore will be able to provide proactive notification if a container that was previously certified based on your organization’s policies moves out of compliance - for example, if a security vulnerability is found in a package you have deployed.

So far, container scanning tools have concentrated on the operating system packages, inspecting the RPM or dpkg databases, reporting on the versions of the packages installed, and correlating those with known CVEs. However, the operating system packages are just one of many components in an image, which may also include configuration files, non-packaged files on the file system, and software artifacts such as pip, Gem, NPM and Java archives. Compliance with your standards for deployment means more than just the latest packages: it means the right packages (required packages, blacklisted packages), the right software artifacts, the right configuration files, and so on.
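
As a rough illustration of the "right packages" idea, the Python sketch below reads package names and versions from dpkg status-style text and checks them against a list of required and blacklisted packages. It is a simplified sketch under stated assumptions, not Anchore's engine or API: the function names `parse_dpkg_status` and `evaluate_policy`, the sample status text, its version strings, and the policy contents are all made up for the example.

```python
def parse_dpkg_status(text):
    """Return {package: version} parsed from dpkg status-style text.

    Stanzas are separated by blank lines; lines that start with whitespace
    are continuations of the previous field and are ignored here.
    """
    packages = {}
    for stanza in text.strip().split("\n\n"):
        fields = {}
        for line in stanza.splitlines():
            if line.startswith((" ", "\t")) or ":" not in line:
                continue
            key, _, value = line.partition(":")
            fields[key.strip()] = value.strip()
        if "Package" in fields:
            packages[fields["Package"]] = fields.get("Version", "")
    return packages


def evaluate_policy(installed, required, blacklisted):
    """Return a list of human-readable policy violations (empty if compliant)."""
    names = set(installed)
    violations = []
    for name in sorted(required - names):
        violations.append(f"missing required package: {name}")
    for name in sorted(blacklisted & names):
        violations.append(f"blacklisted package present: {name}")
    return violations


# Made-up sample data in the style of /var/lib/dpkg/status.
SAMPLE_STATUS = """\
Package: nginx
Status: install ok installed
Version: 1.10.0
Description: small, powerful, scalable web/proxy server
 Extended description line.

Package: telnet
Status: install ok installed
Version: 0.17
"""

installed = parse_dpkg_status(SAMPLE_STATUS)
for problem in evaluate_policy(
    installed,
    required={"nginx", "ca-certificates"},
    blacklisted={"telnet"},
):
    print(problem)
```

The same shape of check extends to the other components mentioned above, such as language-level artifacts and configuration files, given a way to enumerate them.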

Our core engine has already been open sourced and our commercial offering will be available later this month.