If we pay attention to the news lately, we hear that supply chain security is the most important topic ever and that we need to start doing something right now. But the term “supply chain security” isn’t well defined. The real challenge is understanding open source. Open source is in everything now. There is no supply chain problem; there is a problem of understanding open source.

Log4Shell was our Keyser Söze moment

There’s a scene in the movie “The Usual Suspects” where the detective realizes everything he was just told has been a lie. His entire world changed in an instant. It was a plot twist not even the audience saw coming. Humans love plot twists and surprises in our stories, but not in real life. Log4Shell was a plot twist in real life. It was not a fun time.

Open source didn’t take over the world overnight. It took decades. It was a silent takeover that only the developers knew about. Until Log4Shell. When Log4Shell happened everyone started looking for Log4j and they found it, everywhere they looked. But while finding Log4j, we also found a lot more open source. And I mean A LOT more. Open source was in everything, both the software acquired from other vendors and the software built in house. Everything from what’s running on our phones to what’s running the toaster. It’s all full of open source software.

Now that we know open source is everywhere, we should start to ask what open source really is. It’s not what we’ve been told. There’s often talk of “the community”, but there is no community. Open source is a vast collection of independent projects. Some of these projects are worked on by Fortune 100 companies, some by scrappy startups, and some by just one person in their basement who can only work on their project from 9:15pm to 10:05pm every other Wednesday. And open source is big. We can steal a quote from Douglas Adams’ Hitchhiker’s Guide to the Galaxy to properly capture the magnitude of open source:

“[Open source] … is big. Really big. You just won't believe how vastly hugely mind-bogglingly big it is. I mean, you may think it's a long way down the road to the chemist, but that's just peanuts to [open source].”

The challenge for something like open source isn’t just claiming it’s big. We all know it’s big. The challenge is showing how mind-bogglingly big it is. Imagine the biggest thing we can; open source is bigger.

Let’s do some homework.

The size of NPM

For the rest of this post we will focus on NPM, the Node Package Manager. NPM is how we would install dependencies for our Node.js applications. The reason this data was picked is it’s very easy to work with, it has good public data, and it’s the largest package ecosystem in the world today.

It should be said that NPM isn’t special in the context of the data below. If we compare these graphs to Python’s PyPI, for example, we see very similar shapes, just not as large. In the future we may explore other packaging ecosystems, but fundamentally it’s going to look a lot like this. All of this data was generated using the scripts stored on GitHub in a repo aptly named npm-analysis.

Let’s start with the sheer number of NPM package releases over time. It’s a very impressive and beautiful graph.

This is an incredible number of packages. At the time of capturing data, there were 32,600,904 packages. There are of course far more now; just look at the growth. By packages, we mean every version of every package released. There are about 2.3 million unique packages, but when we count every released version of each of those packages, we end up with over 32 million. That works out to an average of roughly 14 versions per package.

It’s hard to imagine how big this really is. There was a proposal recently that suggested we could try to conduct a security review on 10,000 open source projects per year. This is already a number that would need thousands of people to accomplish. But even at 10,000 projects per year, it would take more than 3,000 years to get through just NPM at its current size. And that ignores the fact that we’ve been adding more than 1 million packages per year. So doing some math … we will be done … never. The answer is never.
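The arithmetic behind that claim is easy to check. The sketch below uses only the figures quoted in this post; the function names are made up for illustration, and the growth figure is the "more than 1 million per year" lower bound, so the real backlog would grow faster.

```python
# Figures quoted in this post.
TOTAL_RELEASES = 32_600_904         # every version of every package at capture time
REVIEWS_PER_YEAR = 10_000           # the proposed yearly review capacity
NEW_RELEASES_PER_YEAR = 1_000_000   # lower bound on yearly growth


def years_to_review(backlog: int, reviews_per_year: int) -> float:
    """Years needed to clear a fixed backlog at a constant review rate."""
    return backlog / reviews_per_year


def backlog_after(years: int, backlog: int, added_per_year: int,
                  reviews_per_year: int) -> int:
    """Backlog size after `years` when new releases keep arriving."""
    return backlog + years * (added_per_year - reviews_per_year)


static_years = years_to_review(TOTAL_RELEASES, REVIEWS_PER_YEAR)
print(f"Frozen NPM: {static_years:,.0f} years of review")

# With growth, the backlog gets bigger every year instead of smaller.
in_ten_years = backlog_after(10, TOTAL_RELEASES, NEW_RELEASES_PER_YEAR,
                             REVIEWS_PER_YEAR)
print(f"Backlog after 10 years of reviewing: {in_ten_years:,}")
```

As long as more releases are added per year than are reviewed, the backlog never shrinks, which is why the answer is "never".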

The people

As humans, we love to start creating reasons for this sort of growth. Maybe it’s all malicious packages, or spammers using NPM to sell vitamins. “It’s probably big projects publishing lots of little packages”, or “have you ever seen the amount of stuff in the React framework”? It turns out almost all of NPM is single maintainer projects. The graph below shows the number of maintainers for a given project. We see there are more than 18 million releases that list a single maintainer in their package.json file. That’s over half of all NPM releases ever having just one person maintaining them.

This graph shows that a ridiculous amount of NPM is one person or a small team. If we look at the graph on a logarithmic scale, we can see what the larger projects look like. The linear graph is sort of useless because of the sheer number of one-person projects.

These graphs contain duplicate entries when it comes to maintainers. Many maintainers have more than one project; it’s quite common, in fact. If we filter the graph by the number of unique maintainers, we see this chart.

There are far fewer maintainers, but we see the data is still dominated by single maintainer projects. In this data set we see 727,986 unique NPM maintainers. This is an amazing number of developers. This is a true testament to the power and reach of open source.
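To make the two counts above concrete, here is a minimal sketch of how they could be computed. It is not the code from the npm-analysis repo. The records, package names, and maintainer names are invented for illustration, and it assumes each release record carries a `maintainers` list like the one in package.json.

```python
from collections import Counter

# Made-up release records; the real data set has over 32 million of them.
releases = [
    {"name": "left-thing", "version": "1.0.0", "maintainers": ["alice"]},
    {"name": "left-thing", "version": "1.0.1", "maintainers": ["alice"]},
    {"name": "big-framework", "version": "4.2.0",
     "maintainers": ["bob", "carol", "dave"]},
    {"name": "tiny-util", "version": "0.1.0", "maintainers": ["alice"]},
    {"name": "pair-project", "version": "2.0.0", "maintainers": ["erin", "bob"]},
]


def releases_by_maintainer_count(records):
    """Map 'number of listed maintainers' to 'number of releases'."""
    return Counter(len(record["maintainers"]) for record in records)


def unique_maintainers(records):
    """Collect every distinct maintainer name, counting each person once."""
    return {name for record in records for name in record["maintainers"]}


histogram = releases_by_maintainer_count(releases)
print(sorted(histogram.items()))          # releases per maintainer count
print(len(unique_maintainers(releases)))  # distinct people across all releases
```

In this toy data, "alice" shows up on three releases but counts as one person, which is the duplication the unique maintainer chart removes.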

New packages

Now that we see there are a lot of people doing an enormous amount of work, let’s talk about how things are growing. We mentioned earlier that more than one million packages and versions are being added per year.

If this continues we’re going to be adding more than one million new packages per month soon.

Now, it should be noted this graph isn’t new packages, it’s new releases, so if an existing project releases five updates, it shows up in this graph all five times.

If we only look at brand new packages being added, we get the below graph. A moving average was used here because this graph is a bit jumpy otherwise. New projects don’t get added very consistently.
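For readers unfamiliar with the smoothing step, the sketch below shows a simple trailing moving average. The window size and the monthly counts are invented for illustration; the post does not say which window or averaging variant the graph used.

```python
def moving_average(values, window):
    """Trailing moving average: mean of each run of `window` consecutive values."""
    if window < 1:
        raise ValueError("window must be at least 1")
    averages = []
    running_total = 0.0
    for index, value in enumerate(values):
        running_total += value
        if index >= window:
            # Drop the value that just slid out of the window.
            running_total -= values[index - window]
        if index >= window - 1:
            averages.append(running_total / window)
    return averages


# Made-up, deliberately jumpy counts of new packages per month.
monthly_new_packages = [10, 40, 10, 40, 10, 40]
print(moving_average(monthly_new_packages, 3))
```

The raw series swings between 10 and 40, while the smoothed series only moves between 20 and 30, which makes the underlying trend easier to see.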

This shows us we’re adding fewer than 500,000 new projects per year, which is way better than one million! But it is still a lot more than 10,000.

The downloads

We unfortunately don’t have an impressive graph of downloads to show. The most download data we can get from NPM covers one year, and it’s a single number per package; it’s not spread out by date.

In the last year, there were 130,046,251,733,027 NPM downloads. That feels like a fake number, 15 digits. That’s 130 TRILLION downloads. Now, that’s not spread out very evenly. The median number of downloads for a package is only 217. The bottom 5% have 71 downloads or fewer, and the top 5% have more than 16,000 downloads. It’s pretty clear the downloads are distributed very unevenly. The most popular projects are getting most of the downloads.
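Summary numbers like these come from percentiles of the per-package download counts. The sketch below uses Python's standard `statistics` module on a small invented sample; the sample values and the choice of `method="inclusive"` are assumptions for illustration, not a description of how the figures above were computed.

```python
import statistics

# Made-up yearly download counts for 13 packages, sorted ascending.
downloads = [3, 8, 15, 40, 71, 120, 217, 350, 900, 2_500,
             16_000, 90_000, 4_000_000]

median = statistics.median(downloads)

# quantiles(n=20) returns 19 cut points at 5%, 10%, ..., 95%.
cut_points = statistics.quantiles(downloads, n=20, method="inclusive")
p5, p95 = cut_points[0], cut_points[-1]

# Share of all downloads that go to the single most popular package.
top_share = max(downloads) / sum(downloads)

print(f"median: {median}")
print(f"5th percentile: {p5}, 95th percentile: {p95}")
print(f"top package share of downloads: {top_share:.1%}")
```

Even in this tiny sample, the median says little about the total: one package accounts for nearly all of the downloads.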

Here is a graph of the top 100 projects by downloads. It follows a very common power law distribution curve.

We probably can’t imagine what this download data over all time must look like. It’s almost certainly even more mind boggling than the current data set.

Most of these don’t REALLY matter

Nobody would argue if someone said that the vast majority of NPM packages will never see widespread use. Using the download data we can show 95% of NPM packages aren’t widely used. But the sheer scale is what’s important. 5% of NPM is still more than 100,000 unique packages. That’s a massive number. Even at our 10,000 packages a year review rate, that’s more than ten years of work, and this is just NPM.

If we filter our number of maintainers graph to only include the top 5% of downloaded packages, it basically looks the same, just with smaller numbers.

Every way we look at this data, these trends seem to hold.

Now that we know how incredibly huge this all really is, we can start to talk about this supposed supply chain and what comes next.

What we can actually do about this

First, don’t panic. Then the most important thing we can do is to understand the problem. Open source is already too big to manage and growing faster than we can keep up. It is important to have realistic expectations. Before now many of us didn’t know how huge NPM was. And that’s just one ecosystem. There is a lot more open source out there in the wild.

There’s another quote from Douglas Adams’ Hitchhiker’s Guide to the Galaxy that seems appropriate right now:

‘“I thought,” he said, “that if the world was going to end we were meant to lie down or put a paper bag over our head or something.”

“If you like, yes,” said Ford.

“Will that help?” asked the barman.

“No,” said Ford and gave him a friendly smile.’

Open source isn’t a force we command, it is a resource for us to use. Open source also isn’t one thing, it’s a collection of individual projects. Open source is more like a natural resource. A recent report from the Atlantic Council titled “Avoiding the success trap: Toward policy for open-source software as infrastructure” compares open source to water. It’s an apt analogy on many levels, especially when we realize most of the surface of the planet is covered in water.

The first step to fixing a problem is understanding it. It’s hard to wrap our heads around just how huge open source is; humans are bad at grasping exponential growth. We can’t have an honest conversation about the challenges of using open source without first understanding how big it is and how fast it is growing. The intent of this article isn’t to suggest open source is broken, or bad, or should be avoided. It’s to set the stage to understand what our challenge looks like.

The importance and overall size of open source will only grow as we move forward. Trying to use the ideas of the past can’t work at this scale. We need new tools, ideas, and processes to face our new software challenges. There are many people, companies, and organizations working on this but not always with a grasp of the true scale of open source. We can and should help existing projects, but the easiest first step is to understand how big our open source use is. Do we know what open source we’re using?

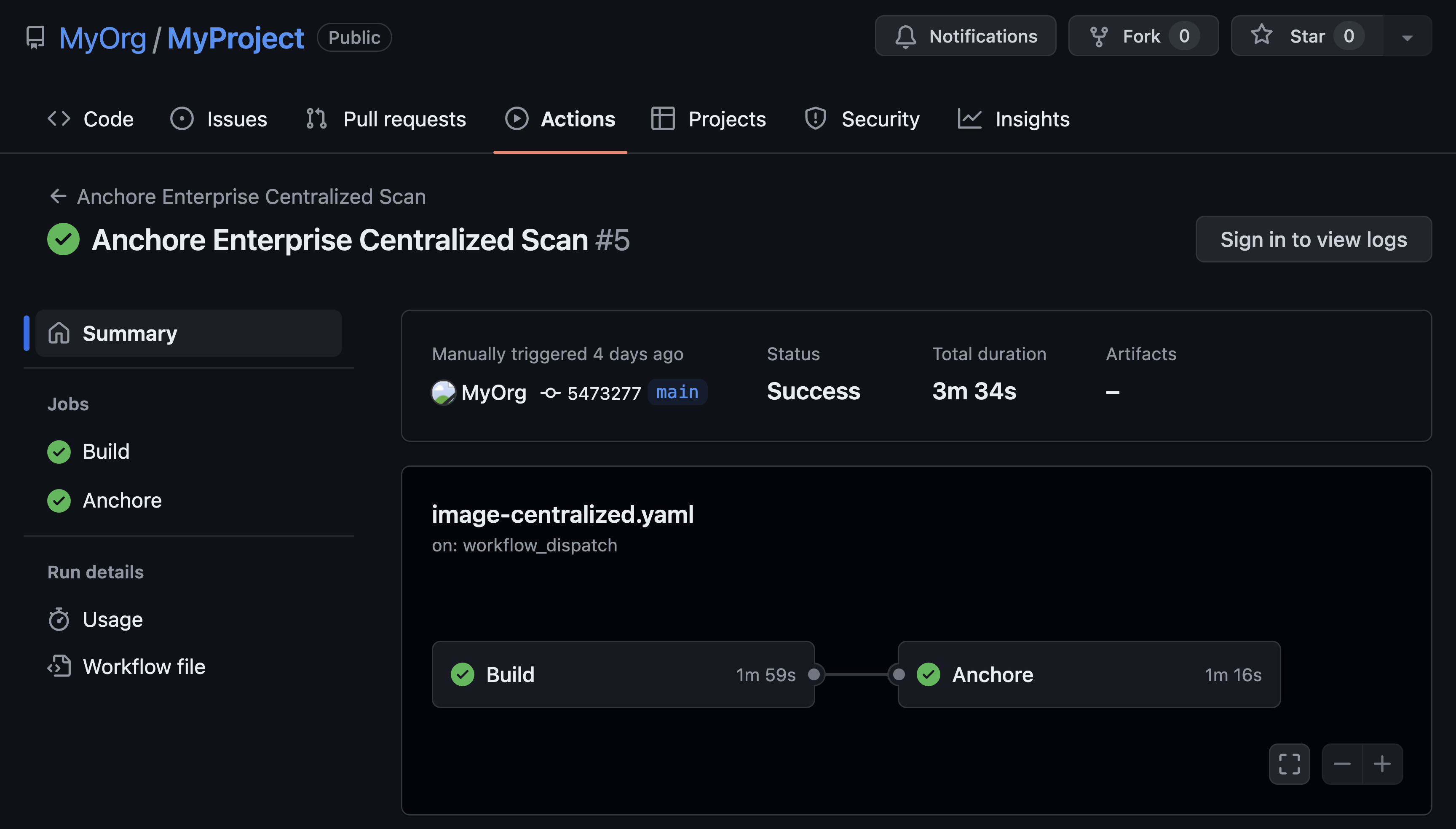

Anchore is working on this problem every day. Come help with our open source projects Syft and Grype, or have a chat with us about our enterprise solution.

Josh Bressers

Josh Bressers is vice president of security at Anchore where he guides security feature development for the company’s commercial and open source solutions. He serves on the Open Source Security Foundation technical advisory council and is a co-founder of the Global Security Database project, which is a Cloud Security Alliance working group that is defining the future of security vulnerability identifiers.