The Security Technical Implementation Guide (STIG) is a Department of Defense (DoD) technical guidance standard that captures the cybersecurity requirements for a specific product, such as a cloud application going into production to support the warfighter. System integrators (SIs), government contractors, and independent software vendors know the STIG process as a well-governed process that all of their technology products must pass. The Defense Information Systems Agency (DISA) released the Container Platform Security Requirements Guide (SRG) in December 2020 to direct how software containers go through the STIG process.

STIGs are notorious for their complexity and the hurdle that STIG compliance poses for technology project success in the DoD. Here are some tips to help your team prepare for your first STIG or to fine-tune your existing internal STIG processes.

4 Ways to Prepare for the STIG Process for Containers

Here are four ways to prepare your teams for containers entering the STIG process:

1. Provide your Team with Container and STIG Cross-Training

DevSecOps and containers, in particular, are still gaining ground in DoD programs. You may very well find your team in a situation where your cybersecurity/STIG experts may not have much container experience. Likewise, your programmers and solution architects may not have much STIG experience. Such a situation calls for some manner of formal or informal cross-training for your team on at least the basics of containers and STIGs.

Look for ways to provide your cybersecurity specialists involved in the STIG process with training about containers if necessary. There are several commercial and free training options available. Check with your corporate training department to see what resources they might have available such as seats for online training vendors like A Cloud Guru and Cloud Academy.

There’s a lot of out-of-date and conflicting information about the STIG process on the web today. System integrators and government contractors need to build STIG expertise across their DoD project teams to cut through such noise.

Including STIG expertise as an essential part of your cybersecurity team is the first step. While contract requirements dictate this proficiency, it only helps if your organization can build a “bench” of STIG experts.

Here are three tips for building up your STIG talent base:

- Make STIG experience a “plus” or “bonus” in your cybersecurity job requirements for roles, even if they may not be directly billable to projects with STIG work (at least in the beginning)

- Develop internal training around STIG practices led by your internal experts and make it part of employee onboarding and DoD project kickoffs

- Create a “reach back” channel from your project teams to get STIG expertise from other parts of your company, such as corporate and other project teams with STIG expertise, to get support for any issues and challenges with the STIG process

Depending on the size of your company, clearance requirements of the project, and other situational factors, the temptation might be there to bring in outside contractors to shore up your STIG expertise internally. For example, the Container Platform Security Resource Guide (SRG) is still new. It makes sense to bring in an outside contractor with some experience managing containers through the STIG process. If you go this route, prioritize the knowledge transfer from the contractor to your internal team. Otherwise, their container and STIG knowledge walk out the door at the end of the contract term.

2. Validate your STIG Source Materials

When researching the latest STIG requirements, you need to validate the source materials. There are many vendors and educational sites that publish STIG content. Some of that content is outdated and incomplete. It’s always best to go straight to the source. DISA provides authoritative and up-to-date STIG content online that you should consider as your single source of truth on the STIG process for containers.

3. Make the STIG Viewer part of your Approved Desktop

Working on DoD and other public sector projects requires secure environments for developers, solution architects, cybersecurity specialists, and other team members. The STIG Viewer should become a part of your DoD project team’s secure desktop environment. Save the extra step of your DoD security teams putting in a service desk ticket to request the STIG Viewer installation.

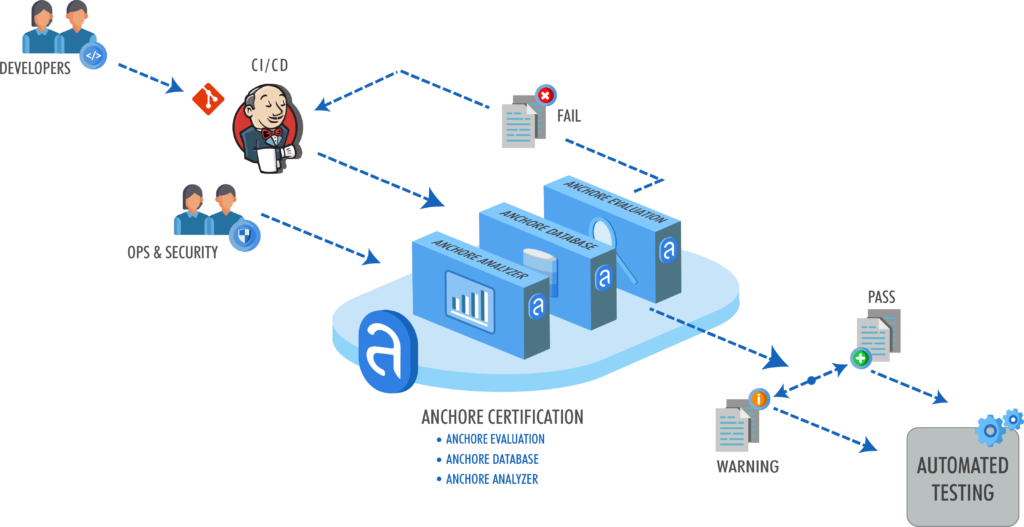

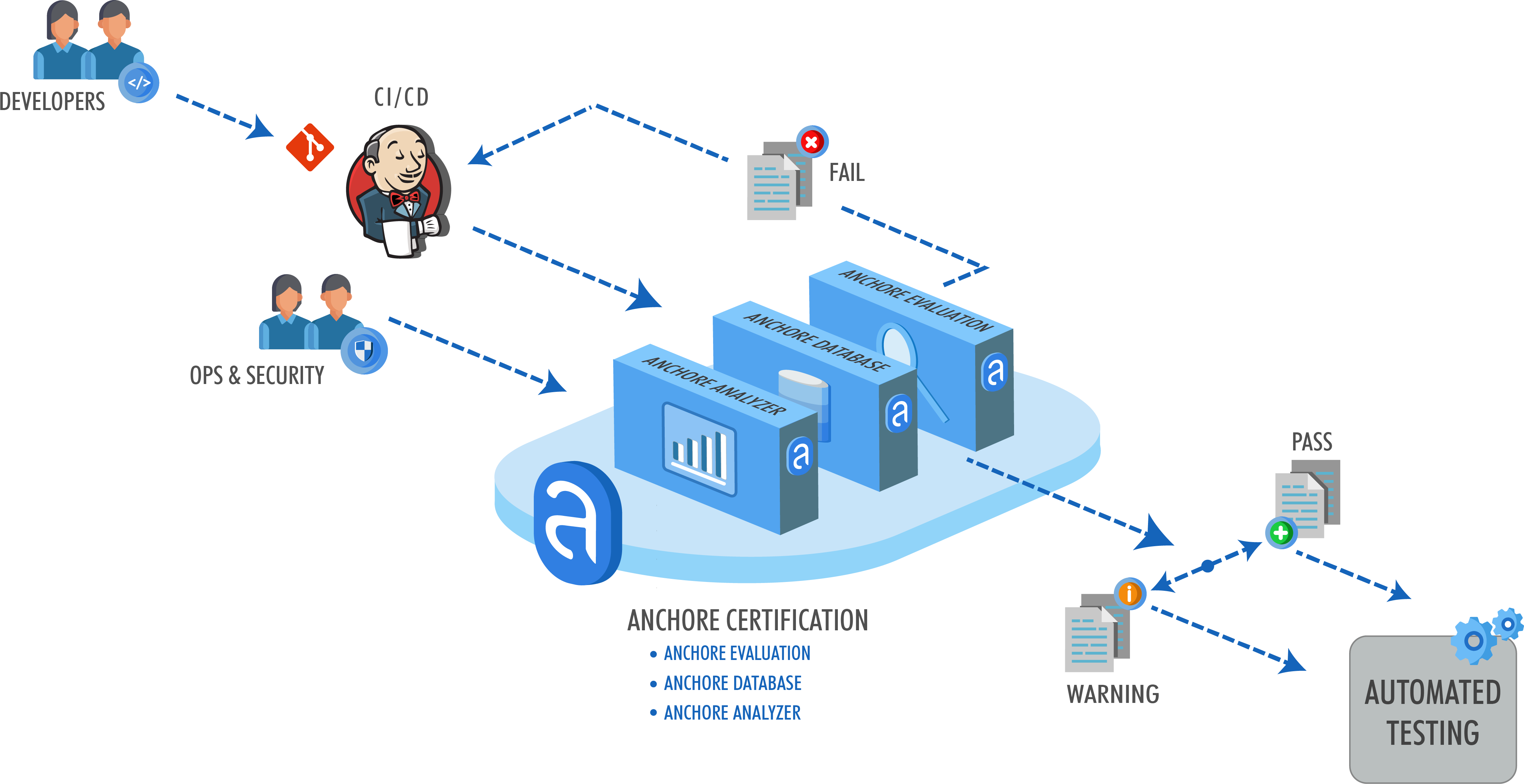

4. Look for Tools that Automate time-intensive Steps in the STIG process

The STIG process is time-intensive, primarily documenting policy controls. Look for tools that’ll help you automate compliance checks before you proceed into an audit of your STIG controls. The right tool can save you from audit surprises and rework that’ll slow down your application going live.

Parting Thought

The STIG process for containers is still very new to DoD programs. Being proactive and preparing your teams upfront in tandem with ongoing collaboration are the keys to navigating the STIG process for containers.

Learn more about putting your containers through the STIG process in our new white paper entitled Navigating STIG Compliance for Containers!