In the rapidly evolving landscape of national defense and cybersecurity, the concept of a Department of Defense (DoD) software factory has emerged as a cornerstone of innovation and security. These software factories represent an integration of the principles and practices found within the DevSecOps movement, tailored to meet the unique security requirements of the DoD and Defense Industrial Base (DIB).

By fostering an environment that emphasizes continuous monitoring, automation, and cyber resilience, DoD Software Factories are at the forefront of the United States Government’s push towards modernizing its software and cybersecurity capabilities. This initiative not only aims to enhance the velocity of software development but also ensures that these advancements are achieved without compromising on security, even against the backdrop of an increasingly sophisticated threat landscape.

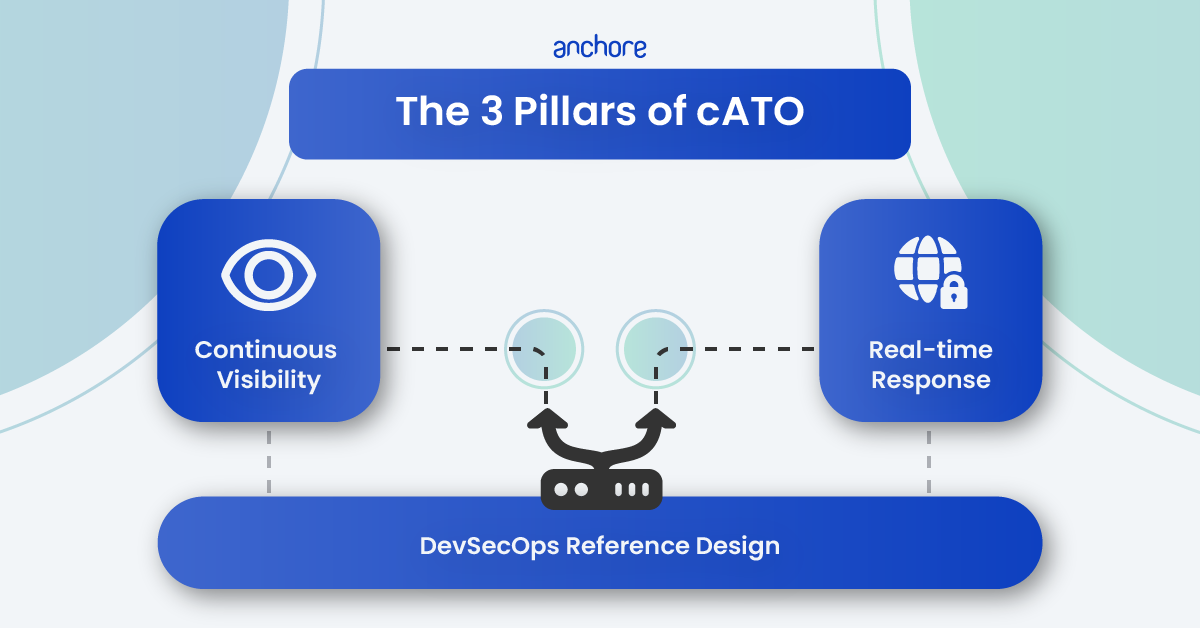

Building and running a DoD software factory is so central to the future of software development that “Establish a Software Factory” is the one of the explicitly named plays from the DoD DevSecOps Playbook. On top of that, the compliance capstone of the authorization to operate (ATO) or its DevSecOps infused cousin the continuous ATO (cATO) effectively require a software factory in order to meet the requirements of the standard. In this blog post, we’ll break down the concept of a DoD software factory and a high-level overview of the components that make up one.

What is a DoD software factory?

A Department of Defense (DoD) Software Factory is a software development pipeline that embodies the principles and tools of the larger DevSecOps movement with a few choice modifications that conform to the extremely high threat profile of the DoD and DIB. It is part of the larger software and cybersecurity modernization trend that has been a central focus for the United States Government in the last two decades.

The goal of a DoD Software Factory is aimed at creating an ecosystem that enables continuous delivery of secure software that meet the needs of end-users while ensuring cyber resilience (a DoD catchphrase that emphasizes the transition from point-in-time security compliance to continuous security compliance). In other words, the goal is to leverage automation of software security tasks in order to fulfill the promise of the DevSecOps movement to increase the velocity of software development.

What is an example of a DoD software factory?

Platform One is the canonical example of a DoD software factory. Run by the US Air Force, it offers both a comprehensive portfolio of software development tools and services. It has come to prominence due to its hosted services like Repo One for source code hosting and collaborative development, Big Bang for a end-to-end DevSecOps CI/CD platform and the Iron Bank for centralized container storage (i.e., container registry). These services have led the way to demonstrating that the principles of DevSecOps can be integrated into mission critical systems while still preserving the highest levels of security to protect the most classified information.

If you’re interested to learn more about how Platform One has unlocked the productivity bonus of DevSecOps while still maintaining DoD levels of security, watch our webinar with Camdon Cady, Chief of Operations and Chief Technology Officer of Platform One.

Who does it apply to?

Federal Service Integrators (FSI)

Any organization that works with the DoD as a federal service integrator will want to be intimately familiar with DoD software factories as they will either have to build on top of existing software factories or, if the mission/program wants to have full control over their software factory, be able to build their own for the agency.

Department of Defense (DoD) Mission

Any Department of Defense (DoD) mission will need to be well-versed on DoD software factories as all of their software and systems will be required to run on a software factory as well as both reach and maintain a cATO.

What are the components of a DoD Software Factory?

A DoD software factory is composed of both high-level principles and specific technologies that meet these principles. Below are a list of some of the most significant principles of a DoD software factory:

Principles of DevSecOps embedded into a DoD software factory

- Breakdown organizational silos

- This principle is borrowed directly from the DevSecOps movement, specifically the DoD aims to integrate software development, test, deployment, security and operations into a single culture with the organization.

- Open source and reusable code

- Composable software building blocks is another principle of the DevSecOps that increases productivity and reduces security implementation errors from developers writing secure software packages that they are not experts in.

- Immutable Infrastructure-as-Code (IaC)

- This principle focuses on treating the infrastructure that software runs on as ephemeral and managed via configuration rather than manual systems operations. Enabled by cloud computing (i.e., hardware virtualization) this principle increases the security of the underlying infrastructure through templated secure-by-design defaults and reliability of software as all infrastructure has to be designed to fail at any moment.

- Microservices architecture (via containers)

- Microservices are a design pattern that creates smaller software services that can be built and scale independently of each other. This principle allows for less complex software that only performs a limited set of behavior.

- Shift Left

- Shift left is the DevSecOps principle that re-frames when and how security testing is done in the software development lifecycle. The goal is to begin security testing while software is being written and tested rather than after the software is “complete”. This prevents insecure practices from cascading into significant issues right as software is ready to be deployed.

- Continuous improvement through key capabilities

- The principle of continuous improvement is a primary characteristic of the DevSecOps ethos but the specific key capabilities that are defined in the DoD DevSecOps playbook are what make this unique to the DoD.

- Define a DevSecOps pipeline

- A DevSecOps pipeline is the system that utilizes all of the preceding principles in order to create the continuously improving security outcomes that is the goal of the DoD software factory program.

- Cyber resilience

- Cyber resiliency is the goal of a DoD software factory, is it defined as, “the ability to anticipate, withstand, recover from, and adapt to adverse conditions, stresses, attacks, or compromises on the systems that include cyber resources.”

Common tools and systems of a DoD software factory

- Code Repository (e.g., Repo One)

- Where software source code is stored, managed and collaborated on.

- CI/CD Build Pipeline (e.g., Big Bang)

- The system that automates the creation of software build artifacts, tests the software and packages the software for deployment.

- Artifact Repository (e.g., Iron Bank)

- The storage system for software components used in development and the finished software artifacts that are produced from the build process.

- Runtime Orchestrator and Platform (e.g., Big Bang)

- The deployment system that hosts the software artifacts pulled from the registry and keeps the software running so that users can access it.

How do I meet the security requirements for a DoD Software Factory? (Best Practices)

Use a pre-existing software factory

The benefit of using a pre-existing DoD software factory is the same as using a public cloud provider; someone else manages the infrastructure and systems. What you lose is the ability to highly customize your infrastructure to your specific needs. What you gain is the simplicity of only having to write software and allow others with specialized skill sets to deal with the work of building and maintaining the software infrastructure. When you are a car manufacturer, you don’t also want to be a civil engineering firm that designs roads.

To view existing DoD software factories, visit the Software Factory Ecosystem Coalition website.

Roll your own by following DoD best practices

If you need the flexibility and customization of managing your own software factory then we’d recommend following the DoD Enterprise DevSecOps Reference Design as the base framework. There are a few software supply chain security recommendations that we would make in order to ensure that things go smoothly during the authorization to operate (ATO) process:

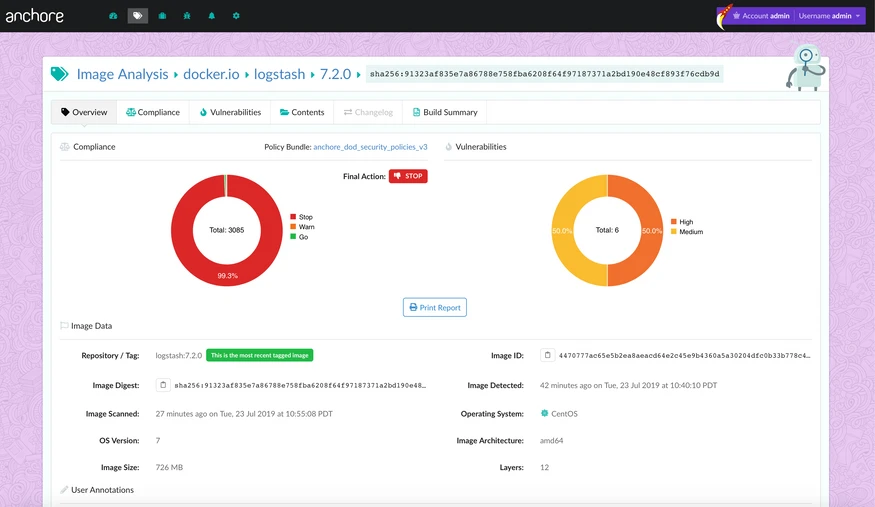

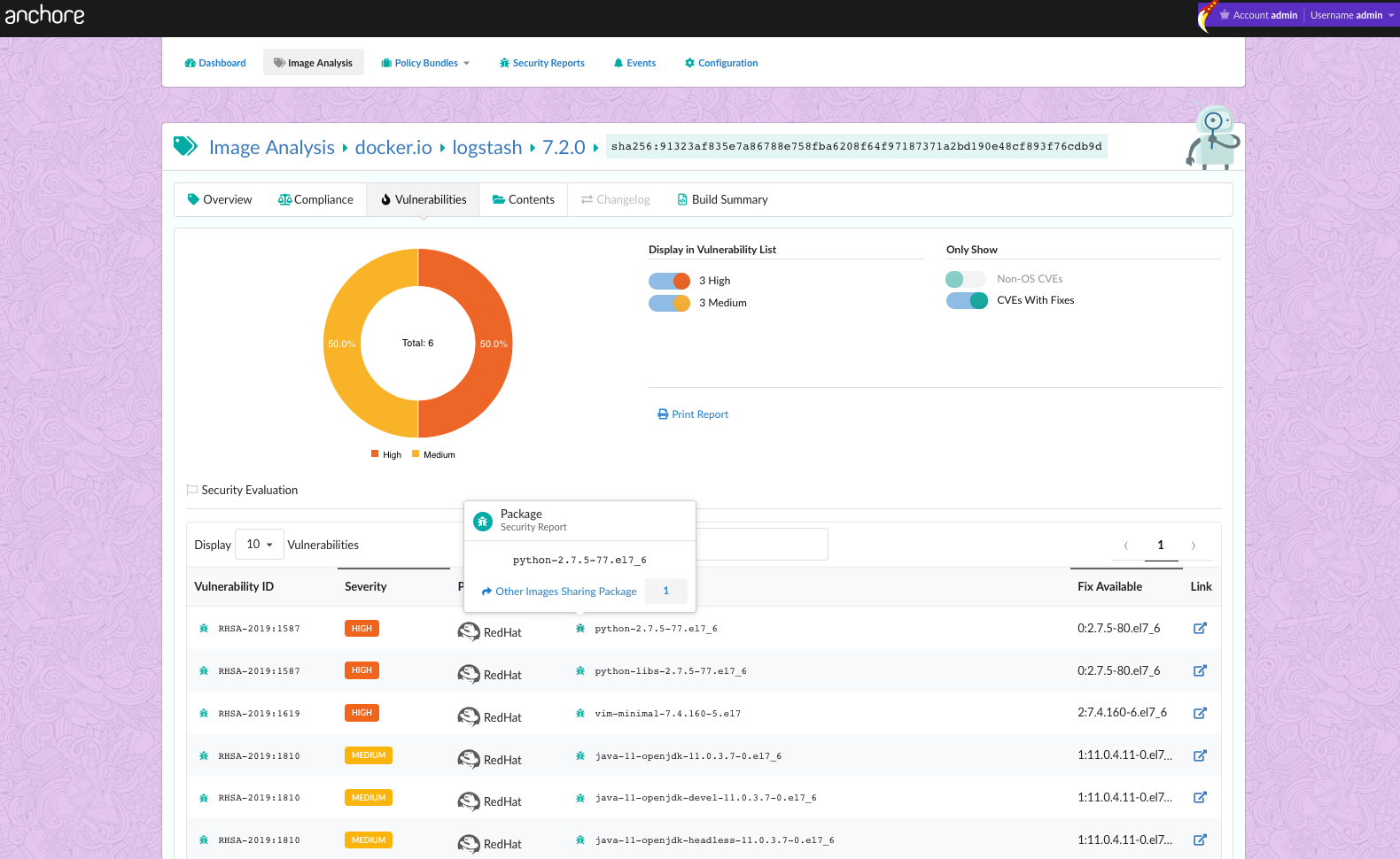

- Continuous vulnerability scanning across all stages of CI/CD pipeline

- Use a cloud-native vulnerability scanner that can be directly integrated into your CI/CD pipeline and called automatically during each phase of the SDLC

- Automated policy checks to enforce requirements and achieve ATO

- Use a cloud-native policy engine in tandem with your vulnerability scanner in order to automate the reporting and blocking of software that is a security threat and a compliance risk

- Remediation feedback

- Use a cloud-native policy engine that can provide automated remediation feedback to developers in order to maintain a high velocity of software development

- Compliance (Trust but Verify)

- Use a reporting system that can be directly integrated with your CI/CD pipeline to create and collect the compliance artifacts that can prove compliance with DoD frameworks (e.g., CMMC and cATO)

- Air-gapped system

- Utilize a cloud-native software supply chain security platform that can be deployed into an air-gapped environment in order to maintain the most strict security for classified missions

Is a software factory required in order to achieve cATO?

Technically, no. Effectively, yes. A cATO requires that your software is deployed on an Approved DoD Enterprise DevSecOps Reference Design not a software factory specifically. If you build your own DevSecOps platform that meets the criteria for the reference design then you have effectively rolled your own software factory.

How Anchore can help

The easiest and most effective method for achieving the security guarantees that a software factory is required to meet for its software supply chain are by using:

- An SBOM generation tool that integrates directly into your software development pipeline

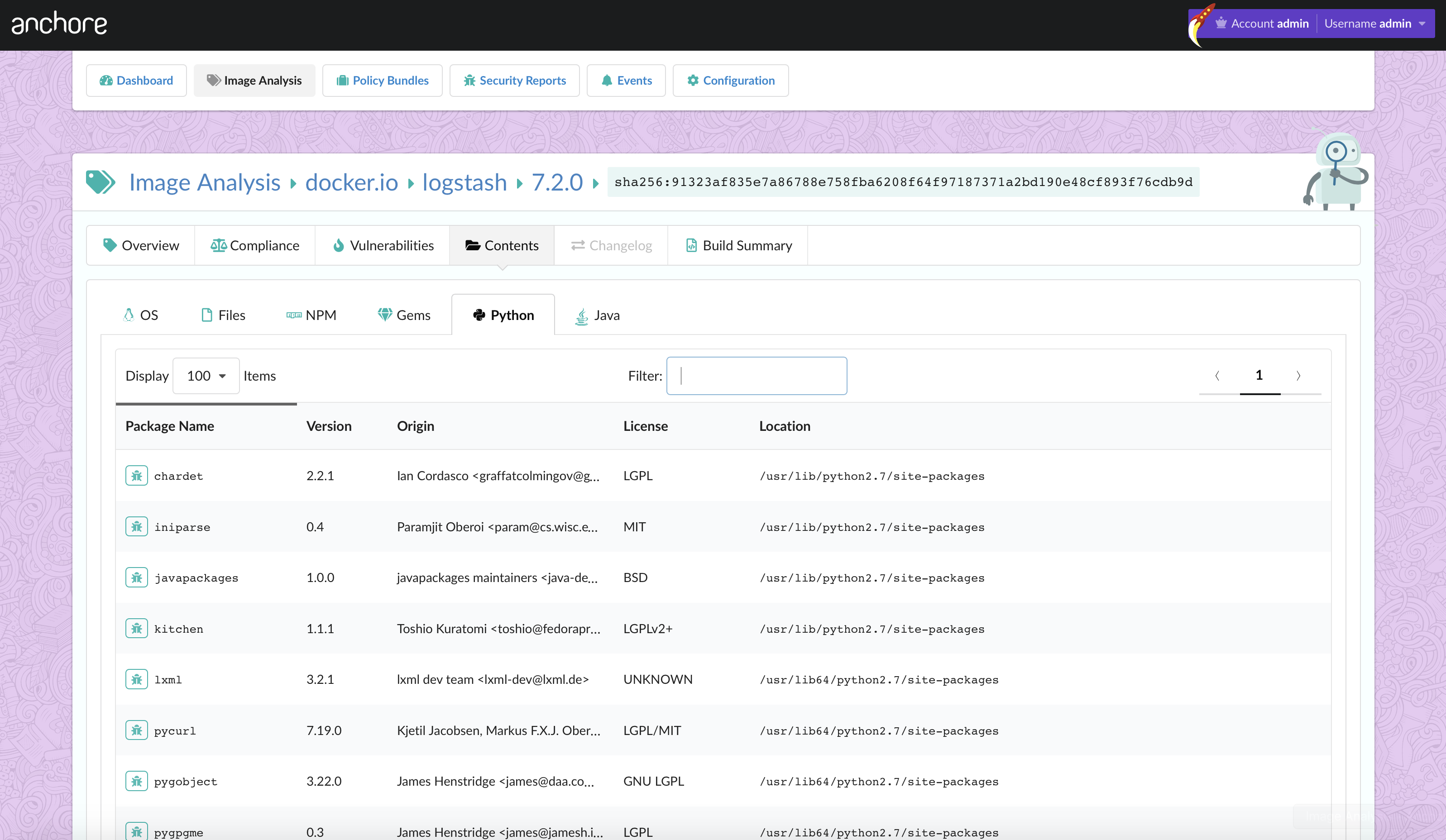

- A container vulnerability scanner that integrates directly into your software development pipeline

- A policy engine that integrates directly into your software development pipeline

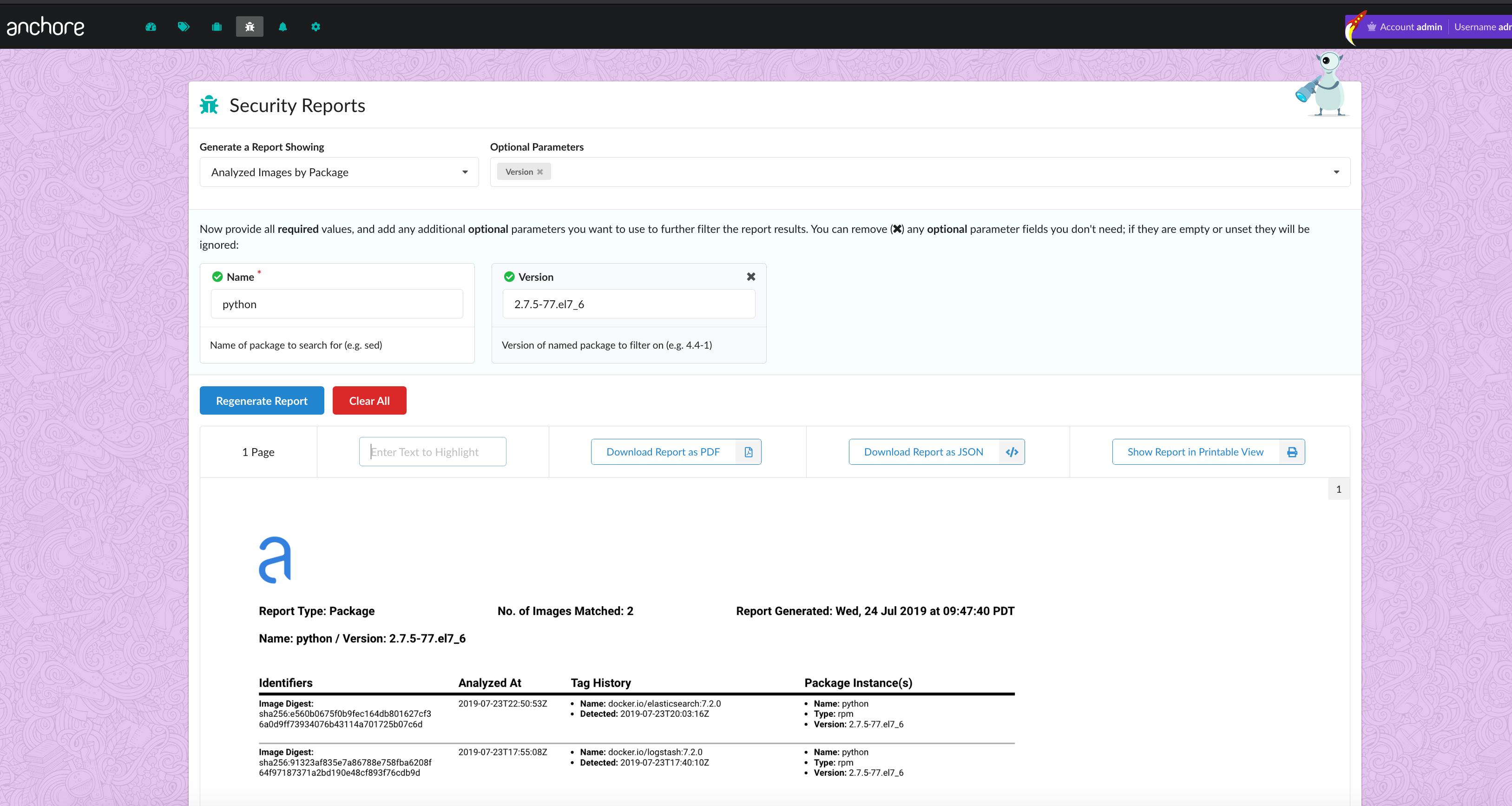

- A centralized database to store all of your software supply chain security logs

- A query engine that can continuously monitor your software supply chain and automate the creation of compliance artifacts

These are the primary components of both Anchore Enterprise and Anchore Federal cloud native, SBOM-powered software composition analysis (SCA) platforms that provide an end-to-end software supply chain security to holistically protect your DevSecOps pipeline and automate compliance. This approach has been validated by the DoD, in fact the DoD’s Container Hardening Process Guide specifically named Anchore Federal as a recommended container hardening solution.

Learn more about how Anchore fuses DevSecOps and DoD software factories.

Conclusion and Next Steps

DoD software factories can come off as intimidating at first but hopefully we have broken them down into a more digestible form. At their core they reflect the best of the DevSecOps movement with specific adaptations that are relevant to the extreme threat environment that the DoD has to operate in, as well as, the intersecting trend of the modernization of federal security compliance standards.

If you’re looking to dive deeper into all things DoD software factory, we have a white paper that lays out the 6 best practices for container images in highly secure environments. Download the white paper below.