Just as the shift from monolithic architectures to microservices fundamentally transformed infrastructure management in the 2010s, bringing agility alongside massive operational complexity, we are witnessing a similar structural shift in software transparency. The definition of “software” itself has expanded. It is no longer just lines of deterministic code; it is now an interconnected web of data, models, hardware, and services.

History is repeating itself, but with higher stakes. The same pattern of opacity that plagued open source adoption two decades ago is now playing out with AI and critical infrastructure. How are we dealing with this repetition of history? By extending an already well-known standard: the software bill of materials (SBOM).

In a recent deep-dive conversation, Kate Stewart, VP of Dependable Embedded Systems at The Linux Foundation and founder of SPDX, laid out the roadmap for SPDX 3.0. Her insights reveal that we are moving from simple file tracking to comprehensive system analysis.

Here is how the landscape is changing and why the “S” in SBOM is evolving from “Software” to “System.”

Learn about SBOMs, how they came to be and how they are used to enable valuable use-cases for modern software.

The evolution of SBOM use-cases

To understand where we are going, we must map the trajectory of supply chain visibility.

- The OSS License Era (2010s) was characterized by license risk. Organizations needed to know if they were accidentally shipping GPL code. Manual tracking was feasible because dependency trees were relatively shallow.

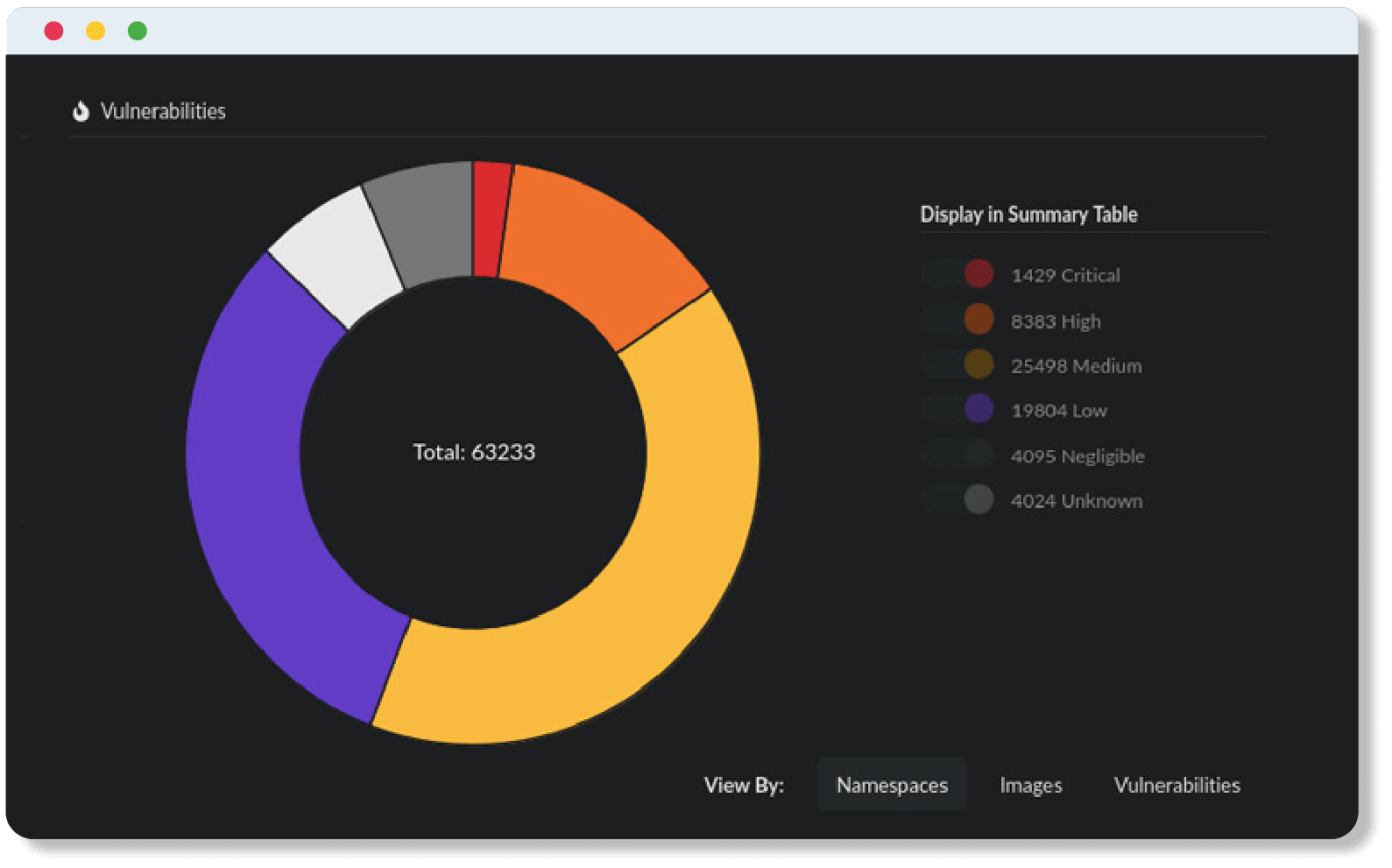

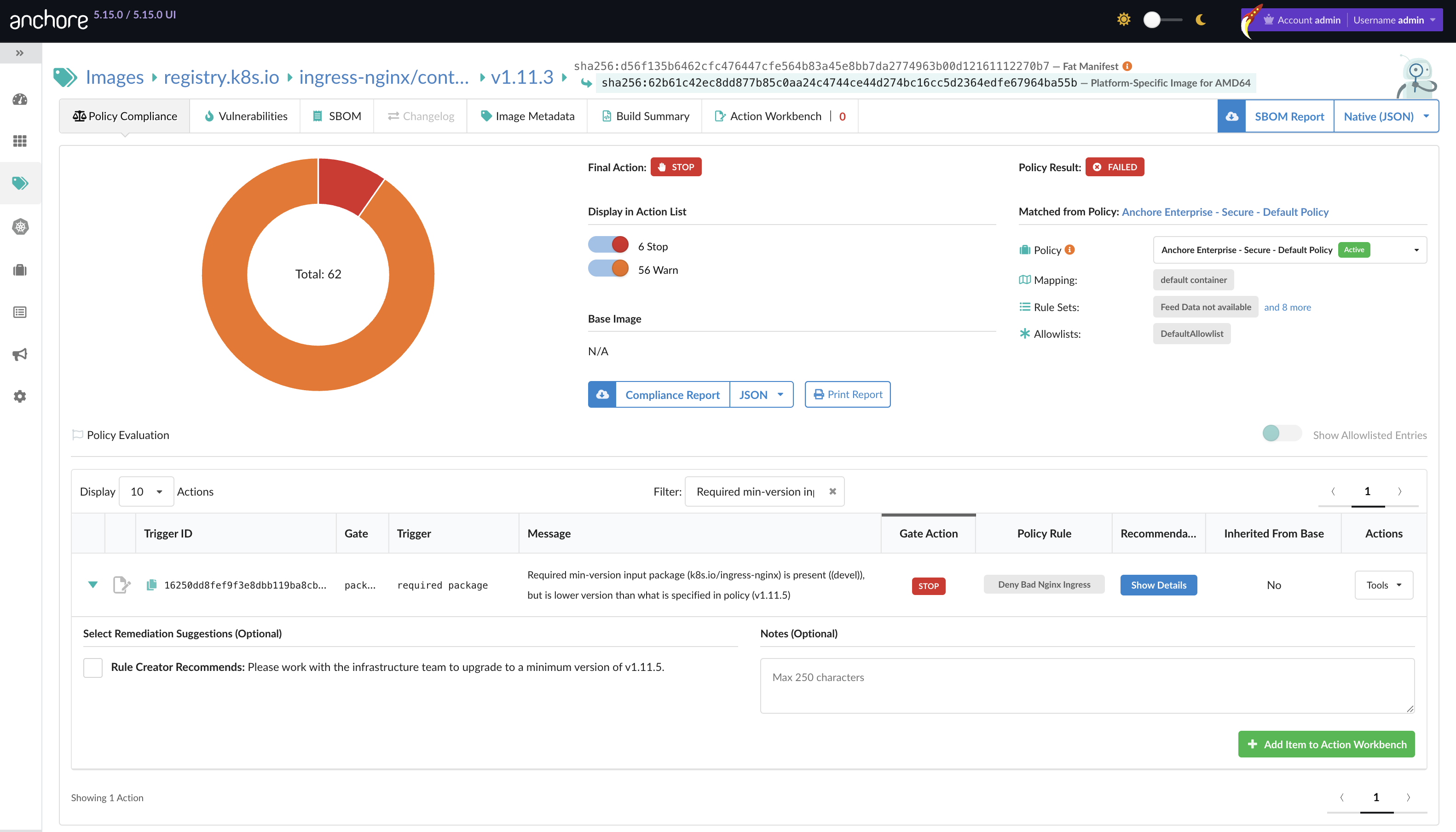

- The CVE Era (2018-2023) brought a security-first focus. Incidents like Log4j exposed the depth of transitive dependencies. The SBOM became a security artifact, but it was still largely static: a snapshot of a moment in time.

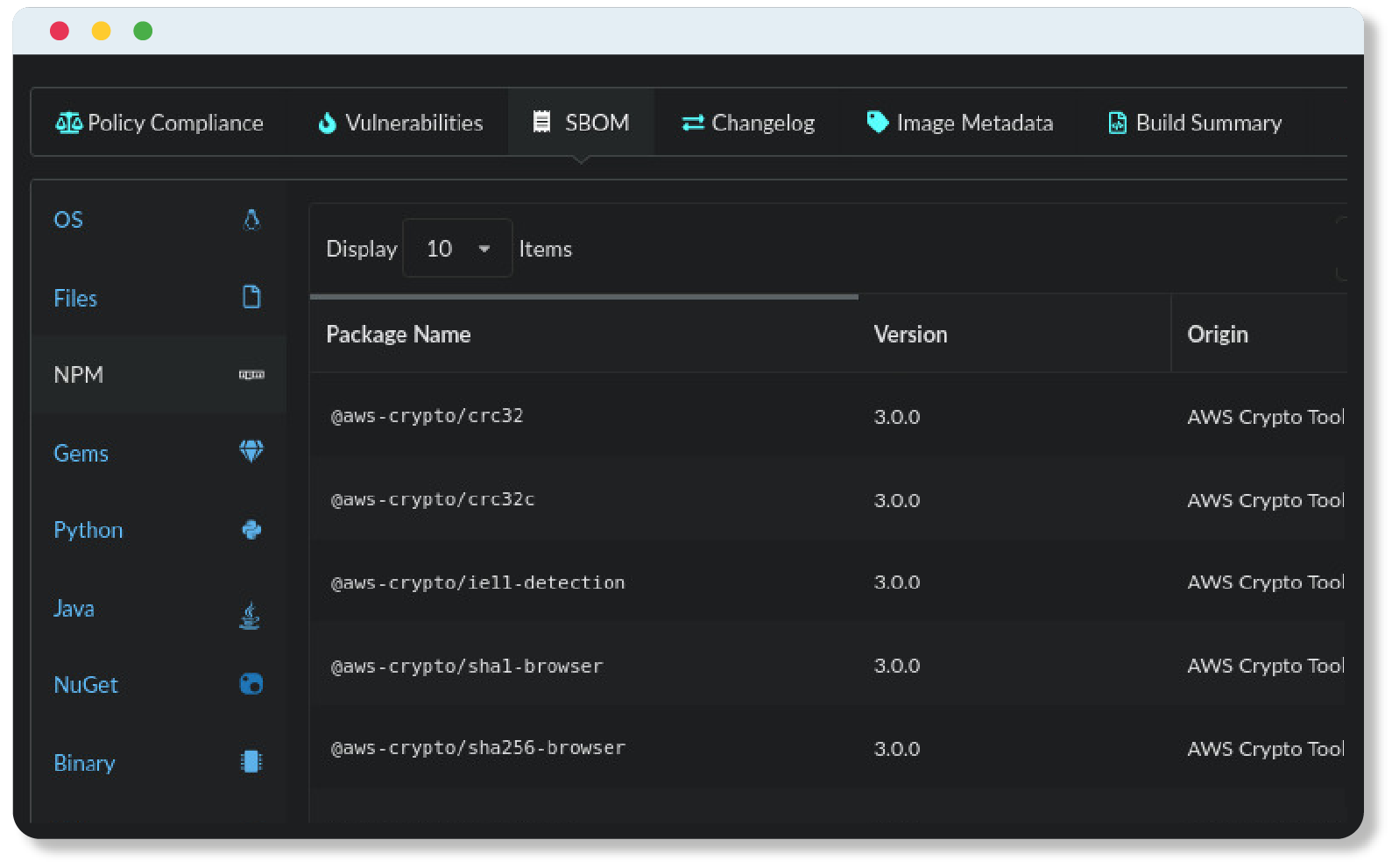

- The AI Era (Present) has emerged as LLMs and embedded systems explode in complexity. We are no longer just tracking libraries; we are also tracking training data, hardware configurations, and model weights.

This evolution brings us to the core challenges Kate Stewart highlighted.

Data is now code

AI systems are fundamentally different from traditional software components. Where conventional code follows logic paths written by humans, AI models operate on patterns learned from data. This creates a visibility crisis: if you do not know the data, you do not know the risk.

Kate Stewart framed this relationship with a perfect analogy that defines the new requirement for AI transparency:

“If you don’t have the transparency into the data sets used to train the models, you can’t build trust in the models. Source code is to build artifacts as data sets are to AI models. The data sets are really what’s biasing the behavior.”

We can no longer treat an AI model as a black box. To trust the output, we must have visibility into the input. SPDX 3.0 addresses this by introducing specific profiles for AI models and pre-training data sets, allowing organizations to track the lineage of a model just as they would track a Git commit.
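To make the Git commit comparison concrete, here is a minimal sketch of a lineage identifier derived from a model's weights and its training data sets. The class and field names (`DatasetRecord`, `ModelRecord`, `trained_on`) are hypothetical and chosen for illustration; they are not the SPDX 3.0 schema.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetRecord:
    """Hypothetical record for one training data set (not the SPDX 3.0 schema)."""
    name: str
    sha256: str  # digest of the data set contents


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical record tying a model artifact to the data it was trained on."""
    name: str
    weights_sha256: str
    trained_on: tuple = ()  # tuple of DatasetRecord


def digest(data: bytes) -> str:
    """Return the SHA-256 hex digest of some bytes."""
    return hashlib.sha256(data).hexdigest()


def lineage_id(model: ModelRecord) -> str:
    """Derive one identifier from the weights and every training data set.

    Sorting the data set digests makes the result independent of listing
    order. Changing the weights or any data set changes the identifier.
    """
    parts = [model.weights_sha256] + sorted(d.sha256 for d in model.trained_on)
    return digest("\n".join(parts).encode("utf-8"))


# Illustrative data: same weights, different training data.
corpus_v1 = DatasetRecord("support-tickets", digest(b"ticket data v1"))
corpus_v2 = DatasetRecord("support-tickets", digest(b"ticket data v2"))
weights = digest(b"model weights")
before = ModelRecord("classifier", weights, (corpus_v1,))
after = ModelRecord("classifier", weights, (corpus_v2,))
print(lineage_id(before) != lineage_id(after))
```

The point of the sketch is that a change in training data alone produces a different identifier, much as a change in source produces a different commit hash.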

Risk as the north star

Despite the complexity of AI and systems, the core principle of supply chain security remains unchanged. It is a concept that Kate Stewart has championed since the early days of SPDX, when legal teams first demanded cryptographic hashes to ensure integrity.

“Transparency is the path to minimizing risk.”

It sounds simple, but at scale, it is a complex orchestration problem. Whether it is a satellite operating system running Zephyr or a cloud-native financial application, you cannot mitigate what you cannot see. The industry is moving toward a model where transparency is not just a “nice to have” for open source compliance but a baseline requirement for operations.

Evidence instead of remediation

One of the most expensive activities in cybersecurity is chasing false positives. Traditional vulnerability scanners report potential risk: if a package version matches a known-vulnerable version, it is flagged. But in complex systems, the presence of a package does not mean the vulnerability is exploitable.

Kate Stewart noted that high-fidelity SBOMs allow for a fundamentally different approach to vulnerability management:

“If we can be authoritative by saying, ‘no, that file with the vulnerability is not in my image.’ Then you don’t have to remediate and you can prove it. Instead, you can create a VEX and say, ‘okay, I’m asserting this and I can attribute it to….’ Knowing if you are truly exposed is critical in this space, right?”

This is the shift from reactive firefighting to strategic analysis. By using VEX (Vulnerability Exploitability eXchange) documents alongside high-fidelity SBOMs, organizations can prove they are not affected. In sectors like automotive or medical devices, where patching requires expensive recertification, this prevents unnecessary suspension of sales or recalls.
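As a rough illustration of how VEX statements can trim a scanner report, the sketch below drops findings that a statement marks as `not_affected` or `fixed`. The `Finding` and `VexStatement` classes and the `actionable` helper are hypothetical, simplified stand-ins rather than a real VEX document format, and `EXAMPLE-0001` is a made-up identifier.

```python
from dataclasses import dataclass

# VEX status labels treated as closing a finding in this sketch.
RESOLVED_STATUSES = {"not_affected", "fixed"}


@dataclass(frozen=True)
class Finding:
    """One scanner result: a vulnerability matched against a package."""
    vulnerability: str
    package: str


@dataclass(frozen=True)
class VexStatement:
    """Simplified assertion about one vulnerability in one package."""
    vulnerability: str
    package: str
    status: str
    justification: str = ""


def actionable(findings, statements):
    """Return the findings that no VEX statement has resolved."""
    resolved = {
        (s.vulnerability, s.package)
        for s in statements
        if s.status in RESOLVED_STATUSES
    }
    return [f for f in findings if (f.vulnerability, f.package) not in resolved]


findings = [
    Finding("CVE-2021-44228", "log4j-core"),
    Finding("EXAMPLE-0001", "example-lib"),  # made-up identifier
]
statements = [
    VexStatement(
        "CVE-2021-44228",
        "log4j-core",
        status="not_affected",
        justification="vulnerable_code_not_present",
    ),
]
remaining = actionable(findings, statements)
print([f.vulnerability for f in remaining])
```

A statement with status `under_investigation` or `affected` would leave the finding in the list, so only explicit, attributable assertions remove work from the queue.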

The economic power of regulation

What started as voluntary cybersecurity best practices is rapidly hardening into market-access requirements. The EU Cyber Resilience Act (CRA) is forcing manufacturers to take security hygiene seriously:

“The penalties for manufacturers are pretty steep [for the EU CRA]. They’re taking it pretty seriously over there. I think transparency is going to improve the practices, right? And people don’t do things unless they have to. It becomes an economic concern at this point and they want to save money, right?”

Regulation is acting as the forcing function for transparency. It is shifting the conversation from “technical debt” to “revenue risk.”

Where do we go from here?

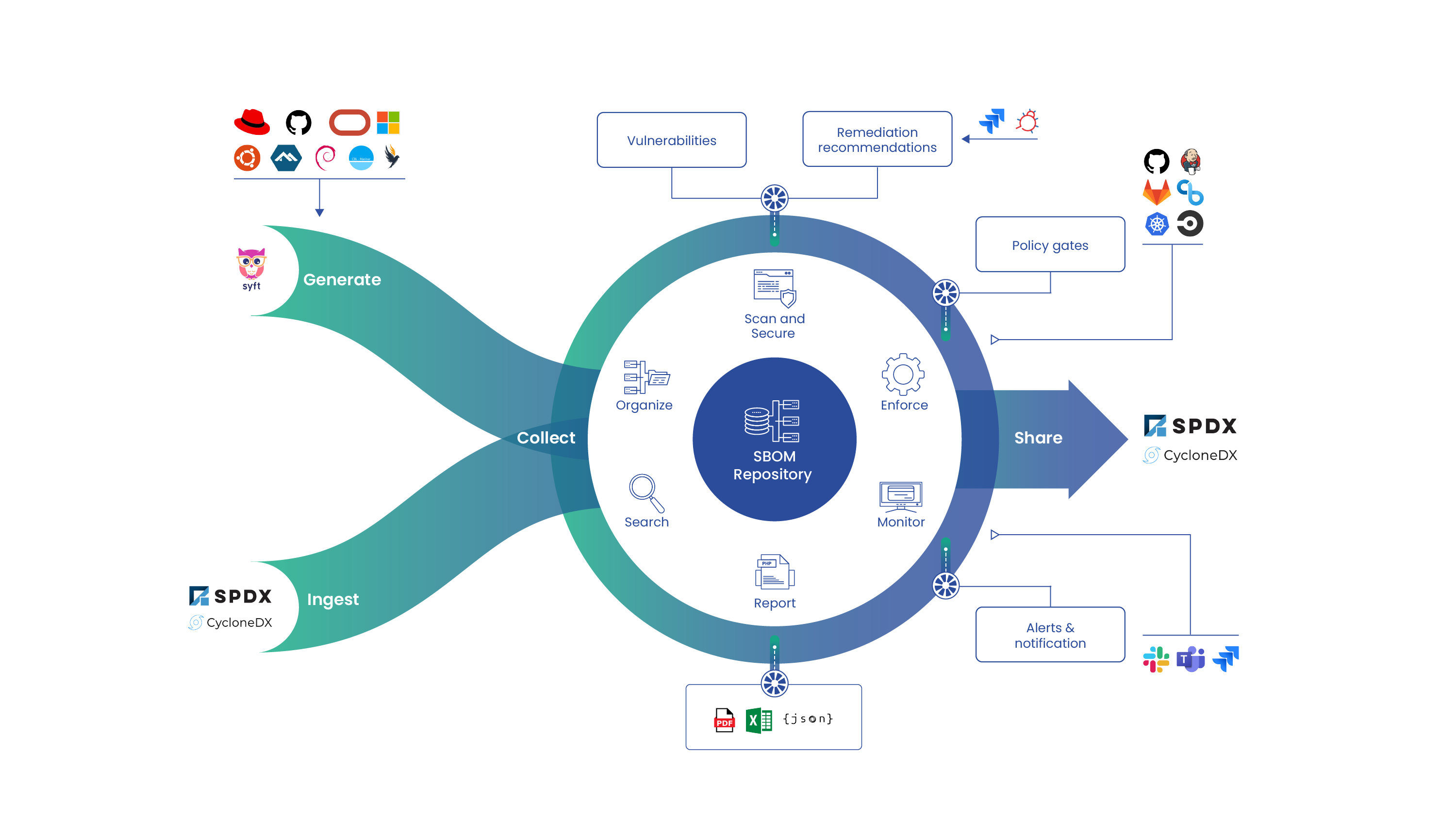

The move to System BOMs and SPDX 3.0 is inevitable, but it does not happen overnight. Organizations can follow a phased approach to get ahead of the curve.

Crawl: Establish the Baseline

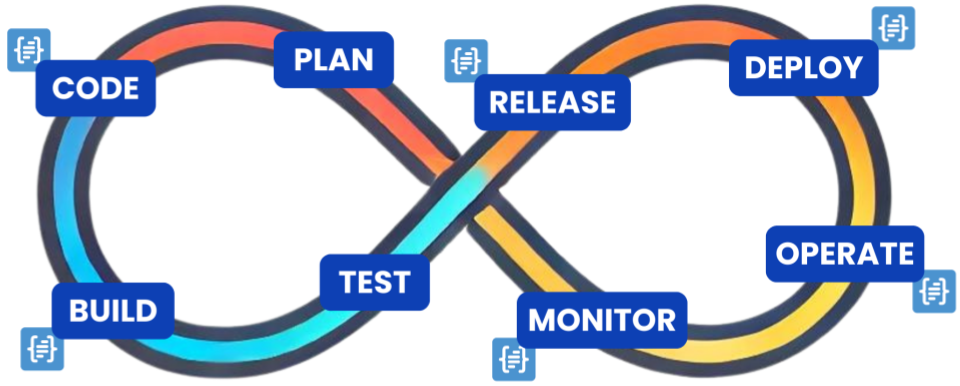

Ensure you are generating standard SBOMs for all build artifacts. Use established tools like Syft to capture the “ingredients list” of your containers and filesystems. This builds the foundational muscle memory for transparency.
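Once an SBOM exists, reading the “ingredients list” back out takes very little code. The sketch below assumes an SBOM in the SPDX 2.x JSON layout, where packages sit in a top-level `packages` array with `name` and optional `versionInfo` fields. The sample document is hand-written for illustration, and `list_packages` is a hypothetical helper.

```python
import json

# A trimmed, hand-written SPDX 2.3 JSON document used only for illustration.
SAMPLE_SBOM = """
{
  "spdxVersion": "SPDX-2.3",
  "name": "example-image",
  "packages": [
    {"SPDXID": "SPDXRef-Package-openssl", "name": "openssl", "versionInfo": "3.0.13"},
    {"SPDXID": "SPDXRef-Package-zlib", "name": "zlib", "versionInfo": "1.3.1"},
    {"SPDXID": "SPDXRef-Package-app", "name": "example-app"}
  ]
}
"""


def list_packages(sbom_text):
    """Return sorted (name, version) pairs from an SPDX 2.x JSON SBOM.

    Packages without a versionInfo field are reported as "unknown".
    """
    doc = json.loads(sbom_text)
    packages = []
    for pkg in doc.get("packages", []):
        packages.append((pkg["name"], pkg.get("versionInfo", "unknown")))
    return sorted(packages)


for name, version in list_packages(SAMPLE_SBOM):
    print(f"{name} {version}")
```

A file produced with, for example, `syft <image> -o spdx-json` should be readable the same way, although real output carries many more fields than this sample.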

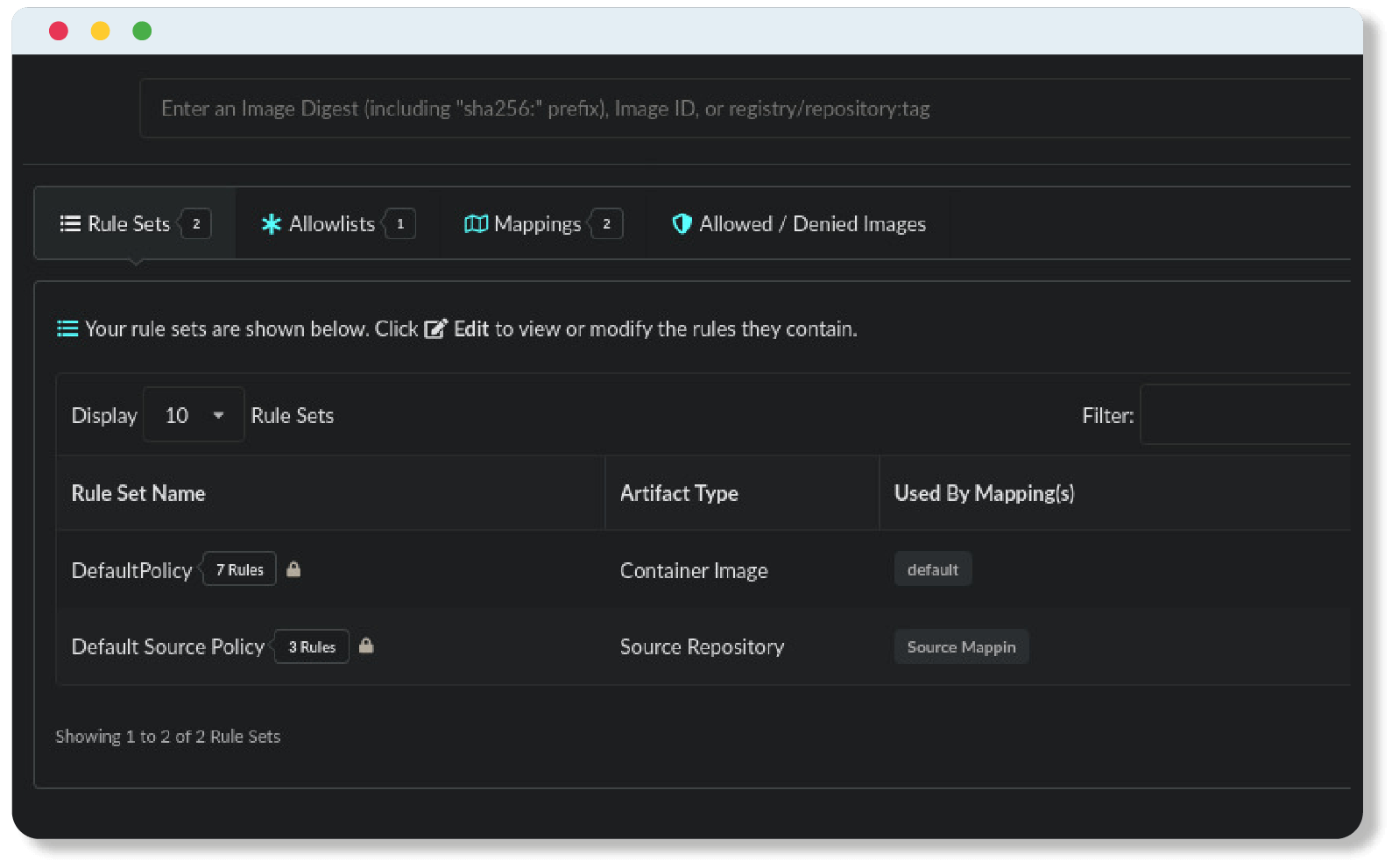

Walk: Add Context and VEX

Begin filtering the noise. Implement VEX to flag vulnerabilities that are not exploitable in your specific configuration. This reduces the burden on developer teams and shifts the focus to real risk.

Run: Adopt System Profiles

As tooling matures, begin mapping the broader system. Link your software SBOMs to the data sets used in your AI models and the hardware profiles of your deployment targets. This creates the “knowledge graph” that Kate Stewart envisions.
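One way to picture such a graph is as a list of typed edges between software, models, data sets, and hardware. The sketch below is a toy, in-memory version: the node names and relationship labels (`contains`, `uses_model`, `trained_on`, `deployed_on`) are hypothetical and are not SPDX 3.0 relationship types. It answers one question: if a data set turns out to be problematic, what sits downstream of it?

```python
from collections import defaultdict, deque

# (source, relationship, target) edges; all names are illustrative.
EDGES = [
    ("app-image", "contains", "inference-service"),
    ("inference-service", "uses_model", "classifier"),
    ("classifier", "trained_on", "support-tickets"),
    ("app-image", "deployed_on", "edge-board"),
]


def impacted_by(edges, node):
    """Return every element that directly or transitively depends on `node`.

    Edges are followed in reverse (target to source) with a breadth-first walk.
    """
    dependents = defaultdict(list)
    for source, _relationship, target in edges:
        dependents[target].append(source)

    seen = set()
    queue = deque([node])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


print(sorted(impacted_by(EDGES, "support-tickets")))
```

The same walk started from `edge-board` reaches only `app-image`, which shows how one structure can serve both data and hardware questions.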

The window of opportunity to build these processes voluntarily is closing. As regulations like the CRA come online, system-level transparency will become the price of admission for global markets.
