Wired recently published an article titled “Security Researchers Warn a Widely Used Open Source Tool Poses a ‘Persistent’ Risk to the US,” which paints a dire picture of a popular open source Go package named easyjson. The article makes this sound like a serious problem, so how much panic is appropriate? To spoil the big conclusion, the answer is “not much”.

So what’s the deal? Are adversaries using open source as a trojan horse into our software? They are, without question. Remember XZ Utils or tj-actions/changed-files? Both were well-resourced attacks against important open source components. It’s clear that open source is a target for attackers. We can name two examples, and it’s likely there are more.

But what about easyjson? Is that a supply chain attack? So far it doesn’t look like it. There is no evidence that using the easyjson Go library creates a risk for an organization. Could this change someday? Absolutely, but so could any other open source library. The potential risk from a Russian company controlling a popular open source library probably isn’t an important detail.

Let’s look at some examples.

Pulling all this data is a lot of work, but there are some quick things anyone can observe in a web browser. Let’s use a couple of popular NPM packages. It’s easy to find this list, which is why I’m using NPM, but the example applies to anything on GitHub.

If we dig into the owners of those widely used repositories, the only one that lists a real location is React: Menlo Park, California, USA, the headquarters of Meta. Where are those other repositories located? We don’t really know. It’s also worth pointing out that all of those repositories have many contributors from all over the world. Just because a project is controlled by an organization in one country doesn’t mean all contributions come from that country.

We know easyjson is from a Russian company because they aren’t trying to hide this fact. The organization that holds the easyjson repository is Mail.ru, a Russian company, and it lists its location as Russia. If they wanted to conduct nefarious activities against open source, this wouldn’t be the best way to do it.

There are some lessons in this though.

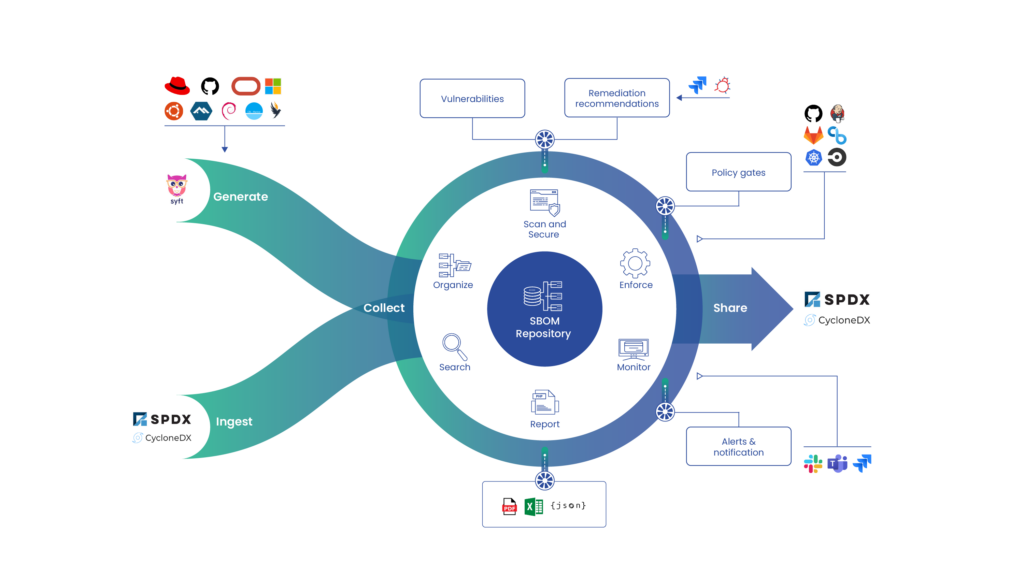

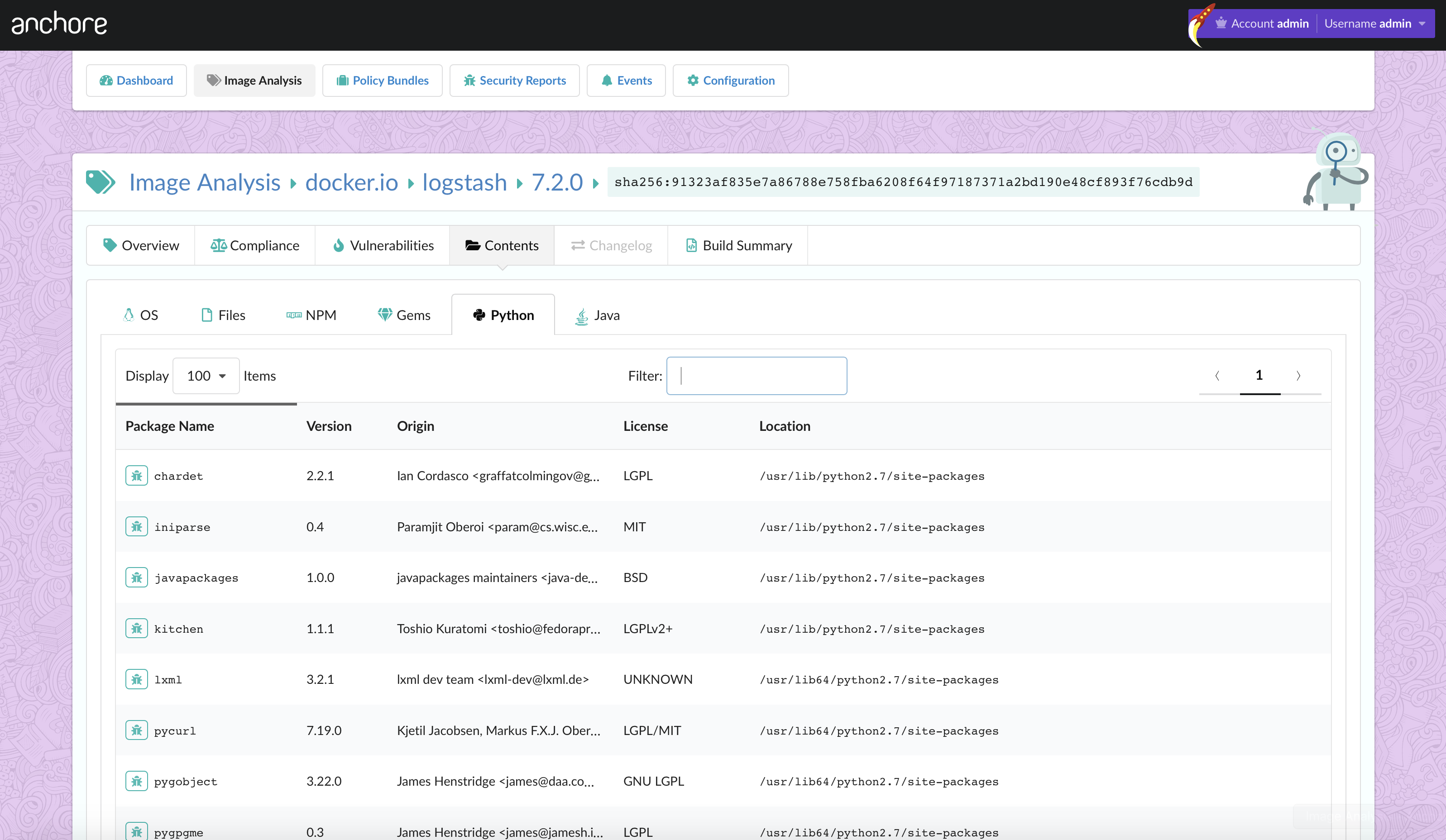

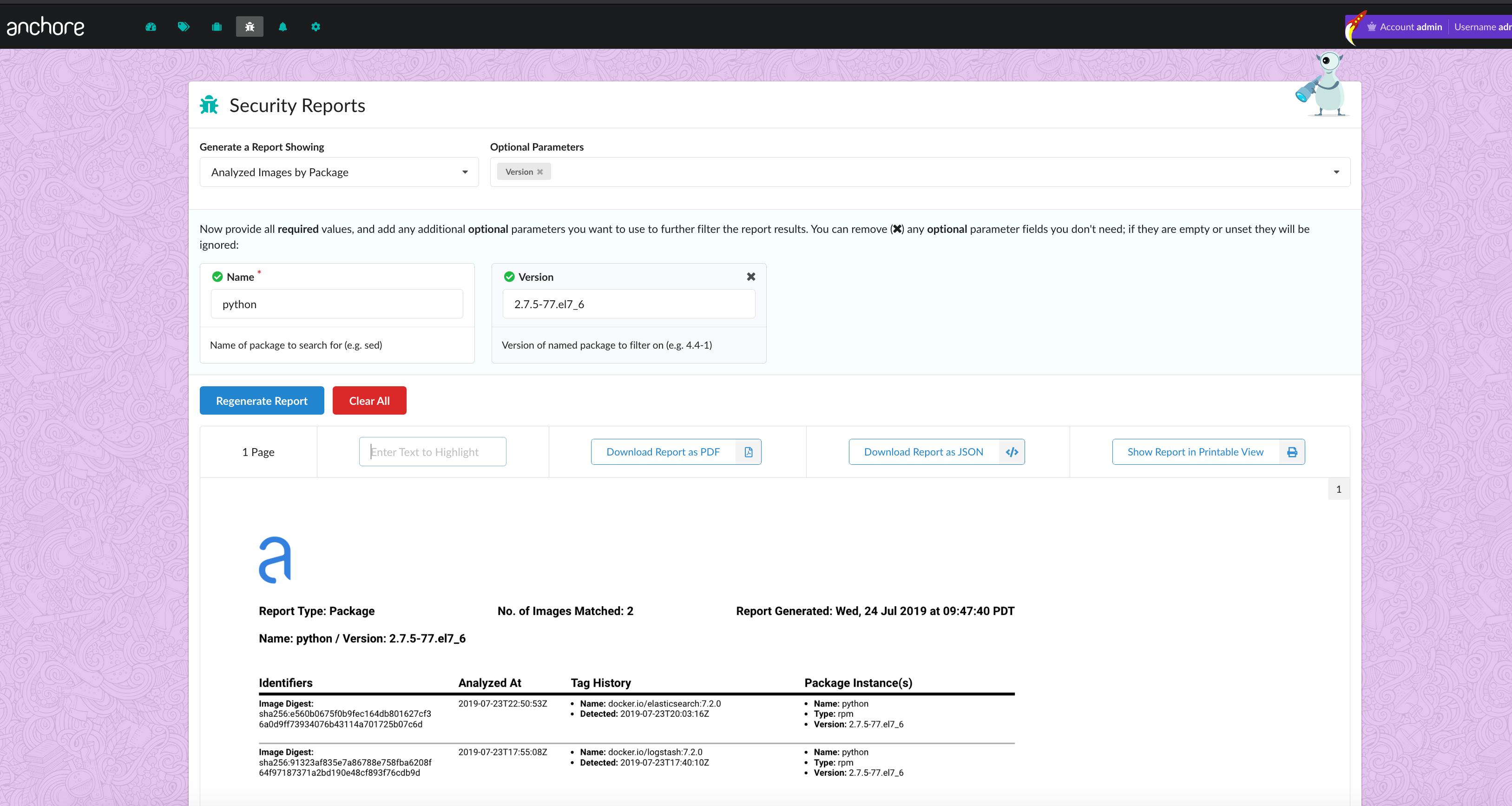

Knowing exactly what software pieces you have is super important for keeping things secure and running smoothly. Imagine you need to find every place you’re using “easyjson.” Could you do it quickly? Probably not easily, right? Today’s software has a lot of hidden parts and pieces. If you don’t have a clear inventory of all those pieces, known as a software bill of materials (SBOM), finding something like easyjson will take forever and you might miss something. If there’s a security problem with easyjson, that delay in finding it can leave you vulnerable and cause serious trouble. Being able to quickly find and fix these kinds of issues is important when most of our software is open source.
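To make the “could you find it quickly?” question concrete, here is a rough sketch of what a manual search looks like for Go projects. It walks a directory tree and checks every go.mod file for a requirement on github.com/mailru/easyjson. The function names (`requiresModule`, `findInTree`) are made up for this example, and the line-based parsing is a simplification: it only sees what go.mod lists, and it knows nothing about containers, vendored binaries, or other ecosystems. That gap is exactly why a real SBOM is the better answer.

```go
package main

import (
	"bufio"
	"fmt"
	"io/fs"
	"os"
	"path/filepath"
	"strings"
)

// requiresModule does a simple line-based scan of go.mod content and
// reports the version listed next to modulePath, if there is one.
// This is a simplification, not a full go.mod parser.
func requiresModule(goMod, modulePath string) (string, bool) {
	scanner := bufio.NewScanner(strings.NewReader(goMod))
	for scanner.Scan() {
		line := strings.TrimSpace(scanner.Text())
		// Handle the single-line form: "require path version".
		line = strings.TrimPrefix(line, "require ")
		fields := strings.Fields(line)
		// Versions in go.mod start with "v"; this skips "path => ..." lines.
		if len(fields) >= 2 && fields[0] == modulePath && strings.HasPrefix(fields[1], "v") {
			return fields[1], true
		}
	}
	return "", false
}

// findInTree walks root and returns a map from go.mod path to the
// version of modulePath that the file lists.
func findInTree(root, modulePath string) (map[string]string, error) {
	hits := map[string]string{}
	err := filepath.WalkDir(root, func(path string, d fs.DirEntry, err error) error {
		if err != nil {
			return err
		}
		if d.IsDir() || d.Name() != "go.mod" {
			return nil
		}
		data, err := os.ReadFile(path)
		if err != nil {
			return err
		}
		if version, ok := requiresModule(string(data), modulePath); ok {
			hits[path] = version
		}
		return nil
	})
	return hits, err
}

func main() {
	root := "."
	if len(os.Args) > 1 {
		root = os.Args[1]
	}
	const target = "github.com/mailru/easyjson"
	hits, err := findInTree(root, target)
	if err != nil {
		fmt.Fprintln(os.Stderr, "scan failed:", err)
		os.Exit(1)
	}
	for path, version := range hits {
		fmt.Printf("%s lists %s %s\n", path, target, version)
	}
}
```

Run it against a directory of checked-out repositories and it prints each go.mod that lists the module. Even this toy version shows the problem: you need every repository checked out and up to date before you can answer a simple question.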

The issue of open source sovereignty introduces complex challenges in today’s interconnected world. If organizations and governments decide to prioritize understanding the origins of their open source dependencies, they immediately encounter a fundamental question: which countries warrant the most scrutiny? Establishing such a list risks geopolitical bias and may not accurately reflect the actual threat landscape. Furthermore, the practicalities of tracking the geographical origins of open source contributions are significant. Developers and maintainers operate globally, and attributing code contributions to a specific nation-state is fraught with difficulty. IP address geolocation can be easily circumvented, and self-reported location data is unreliable, especially in the context of malicious actors who would intentionally falsify such information. This raises serious doubts about relying on geographical data for assessing open source security risks. It necessitates exploring alternative or supplementary methods for ensuring the integrity and trustworthiness of the open source software supply chain, methods that move beyond simplistic notions of national origin.

For a long time, we’ve kind of just trusted open source stuff without really checking it out. Organizations grab these components and throw them into their systems, and so far that’s mostly worked. Things are changing though. People are getting more worried about vulnerabilities, and there are new rules coming out, like the Cyber Resilience Act, that are going to make us be more careful with software. We’re probably going to have to check things out before we use them, keep an eye on them for security issues, and update them regularly. Basically, just assuming everything’s fine isn’t going to cut it anymore. We need to start being a lot more aware of security. This means organizations are going to have to learn new ways to work and change how they do things to make sure their software is safe and follows the rules.

Wrapping up

The origin of easyjson being traced back to a Russian company raises a valid point about the perception and utilization of open source software. While the geographical roots of a project don’t inherently signify malicious intent, this instance serves as a potent reminder that open source is not simply “free stuff” devoid of obligations for its users. The responsibility for ensuring the security and trustworthiness of the software we integrate into our projects lies squarely with those who build and deploy it.

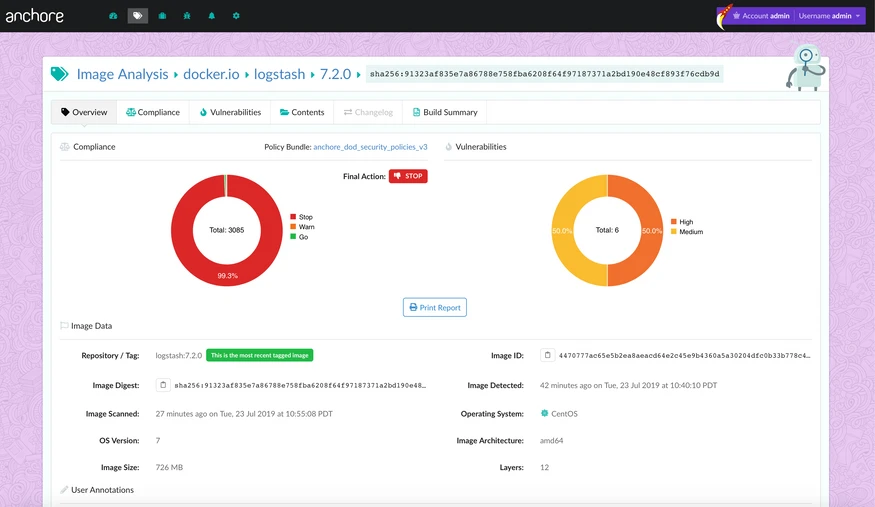

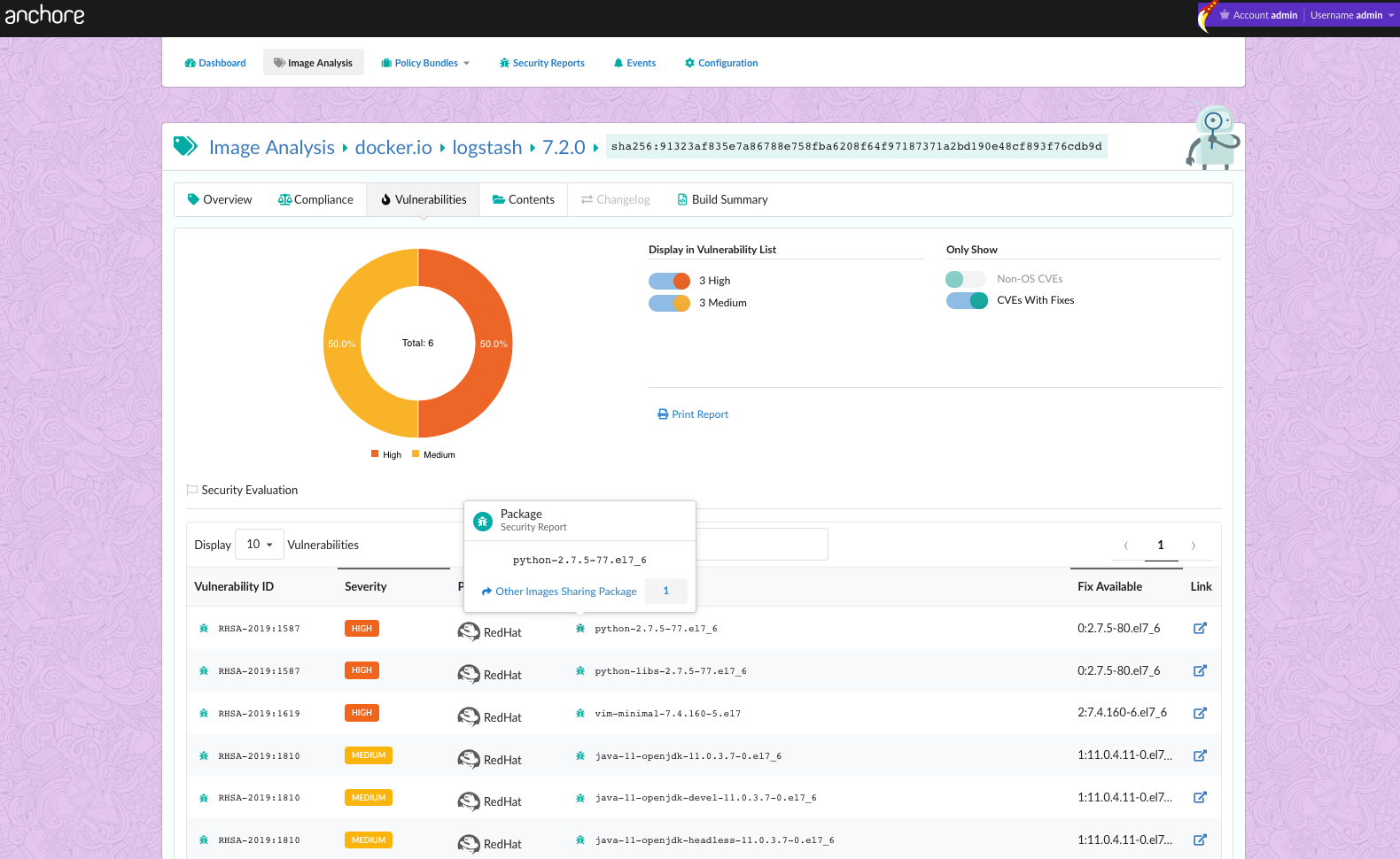

Anchore has two tools, Syft and Grype, that can help us take responsibility for the open source software we use. Syft can generate SBOMs, making sure we know what we have. Then we can use Grype to scan those SBOMs for vulnerabilities, making sure our software isn’t an actual threat to our environments. When a backdoor is found in an open source package, like XZ Utils, Grype will light up like a Christmas tree, letting you know there’s a problem.

The EU Cyber Resilience Act (CRA) shifts this burden of responsibility onto software builders. This approach acknowledges the practical limitations of expecting individual open source developers, who often contribute their time and effort voluntarily, to shoulder the comprehensive security and maintenance demands of widespread software usage. Instead of relying on the goodwill and diligence of unpaid contributors to conduct our due diligence, the CRA framework encourages a more proactive and accountable stance from the entities that commercially benefit from and distribute software, including open source components.

This shift in perspective is crucial for the long-term health and security of the software ecosystem. It fosters a culture of proactive risk assessment, thorough vetting of dependencies, and ongoing monitoring for vulnerabilities. By recognizing open source as a valuable resource that still requires careful consideration and due diligence, rather than a perpetually free and inherently secure commodity, we can collectively contribute to a more resilient and trustworthy digital landscape. The focus should be on building secure systems by responsibly integrating open source components, rather than expecting the open source community to single-handedly guarantee the security of every project that utilizes their code.